To mark Global Accessibility Awareness Day, Apple has previewed some of the accessibility technologies it is working on, which will reach its various devices over the course of this year. Judging by the announcement published by the Cupertino company, it is clear that Apple has taken the matter very seriously: it contains a wealth of interesting proposals that many users will undoubtedly appreciate.

The most notable of Apple's announcements today is, without a doubt, the new Live Captions feature, coming to iOS, iPadOS, and macOS and aimed especially at people who are deaf or hard of hearing. Its function, as you may have already guessed, is to convert speech into text in real time. It can be used in many contexts: during a phone or FaceTime call, in a videoconferencing service or on social networks, while streaming media content, or even while talking to someone next to you.

The captions generated by this new system distinguish between different voices when there is more than one speaker, and indicate which speaker each piece of the transcript corresponds to. In addition, when Live Captions is used for calls on a Mac, the system also offers the reverse function: the user can type a response, and text-to-speech technology will "read" it aloud to the other people taking part in the call.

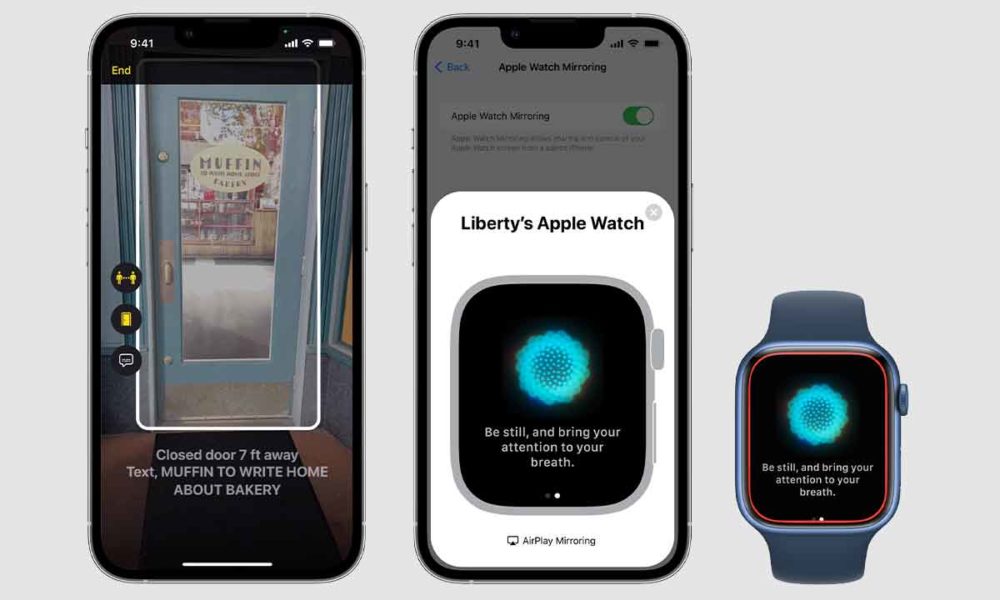

Another very interesting feature, this one for users of Apple devices equipped with a LiDAR sensor, is an assistance system for people who are blind or have low vision. The sensor, in combination with the camera and machine learning, is able to identify doors and warn the user when they are approaching one. The assistant will indicate how far away the door is, whether it is open or closed, whether it has a knob or a handle, and whether it opens by pulling or pushing. In addition, it will recognize and read the signs and symbols displayed around the door.

There is also news for Apple Watch users: it will be possible to mirror the watch's screen on the iPhone, making the watch easier to control for people who, because of motor disabilities, have difficulty operating the Apple Watch directly. In this way, these users will be able to benefit from the watch's many tracking functions without having to deal with the complications that handling it can entail.

Additional languages are also being added to VoiceOver, Apple's technology for reading aloud the content displayed on the screen. The new languages include Catalan, Basque and Chilean Spanish. And for hearing-impaired users, the device will learn to recognize various sounds, from an alarm to a doorbell, and alert the user to them.

Without a doubt, Apple has done a great job, and these are only the most notable of the new features. Now all that remains is to wait for their arrival, which we hope will happen as soon as possible, since they can make an important difference for people with various types of disabilities.