In hardware, the more transistors a numerical representation needs, the more complex it is to operate on. That is why computers use more precise numerical representations only when the values being stored and processed actually require that precision.

There are a number of applications, especially in finance, electronic commerce and some web services, whose data requires a unit capable of working directly with decimal values, either in floating point or as integers. As you may have guessed from those markets, we are talking about very specific sectors that use server CPUs.

What are the limitations of the binary system? Take conventional binary floating point: to represent something as simple as 0.1 we need an infinitely repeating binary fraction, which has to be rounded to fit in a finite number of bits. In a decimal system, by contrast, it is simply the fraction 1/10. Think for a moment about what would happen in the financial markets if, for lack of precision, the numbers did not add up.
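The rounding problem is easy to reproduce in software. The following is a minimal sketch using Python's standard `decimal` module as a stand-in for decimal arithmetic; it illustrates the representation issue only and says nothing about how a hardware decimal unit is built.

```python
from decimal import Decimal

# Binary floating point: 0.1 and 0.2 are stored as the nearest binary
# fractions, so their sum is not exactly the double closest to 0.3.
binary_sum = 0.1 + 0.2
print(binary_sum == 0.3)  # False

# Decimal arithmetic: values built from strings are held exactly in base 10.
decimal_sum = Decimal("0.1") + Decimal("0.2")
print(decimal_sum == Decimal("0.3"))  # True

# Converting the float 0.1 to Decimal exposes the value the binary format
# really stores, which is slightly larger than one tenth.
stored_tenth = Decimal(0.1)
print(stored_tenth > Decimal("0.1"))  # True
```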

Binary and decimal on the same CPU

Pure decimal computing does not exist in today's processors, not even at the server scale. The base in which a computer operates determines not only how its execution units process data, but also how instructions are decoded and how system RAM is accessed. In other words, it would be a completely different system whose code would not be compatible at all.

That is why, as a standard, the following rules are applied to combine the ability to work in binary and in decimal:

- Instructions are always encoded in binary.

- Data is stored in binary or decimal as required.

- Each data type, whether by base (binary or decimal) or by kind (floating point or integer), is handled by a different type of unit.

- Memory addressing is always done in binary, to avoid access conflicts and so that the CPU does not have to work with two different address spaces.

This is done in order to have a single, universal control unit in charge of fetching and decoding the instructions. At the end of the day, what matters is being able to operate on base-10 numbers, not how it is done.
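To make "data stored in decimal" on an otherwise binary machine more concrete, here is a minimal sketch of packed binary-coded decimal (BCD), one common encoding in which each decimal digit occupies four bits. The article does not say which encoding any given CPU uses, so BCD is an assumption here, and the function names `to_packed_bcd` and `from_packed_bcd` are hypothetical.

```python
def to_packed_bcd(n: int) -> bytes:
    """Encode a non-negative integer as packed BCD, two decimal digits per byte."""
    if n < 0:
        raise ValueError("this sketch handles non-negative values only")
    digits = str(n)
    if len(digits) % 2:
        digits = "0" + digits  # pad to a whole number of bytes
    return bytes(
        (int(digits[i]) << 4) | int(digits[i + 1])
        for i in range(0, len(digits), 2)
    )


def from_packed_bcd(data: bytes) -> int:
    """Decode packed BCD back into an integer, rejecting nibbles above 9."""
    value = 0
    for byte in data:
        high, low = byte >> 4, byte & 0x0F
        if high > 9 or low > 9:
            raise ValueError("invalid BCD digit")
        value = value * 100 + high * 10 + low
    return value


encoded = to_packed_bcd(1995)
print(encoded.hex())                   # 1995: the hex dump reads like the decimal number
print(int.from_bytes(encoded, "big"))  # 6549: the same bytes read as a plain binary integer
print(from_packed_bcd(encoded))        # 1995
```

The bytes live in ordinary binary-addressed memory either way; only the unit that interprets them decides whether they mean 1995 or 6549.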

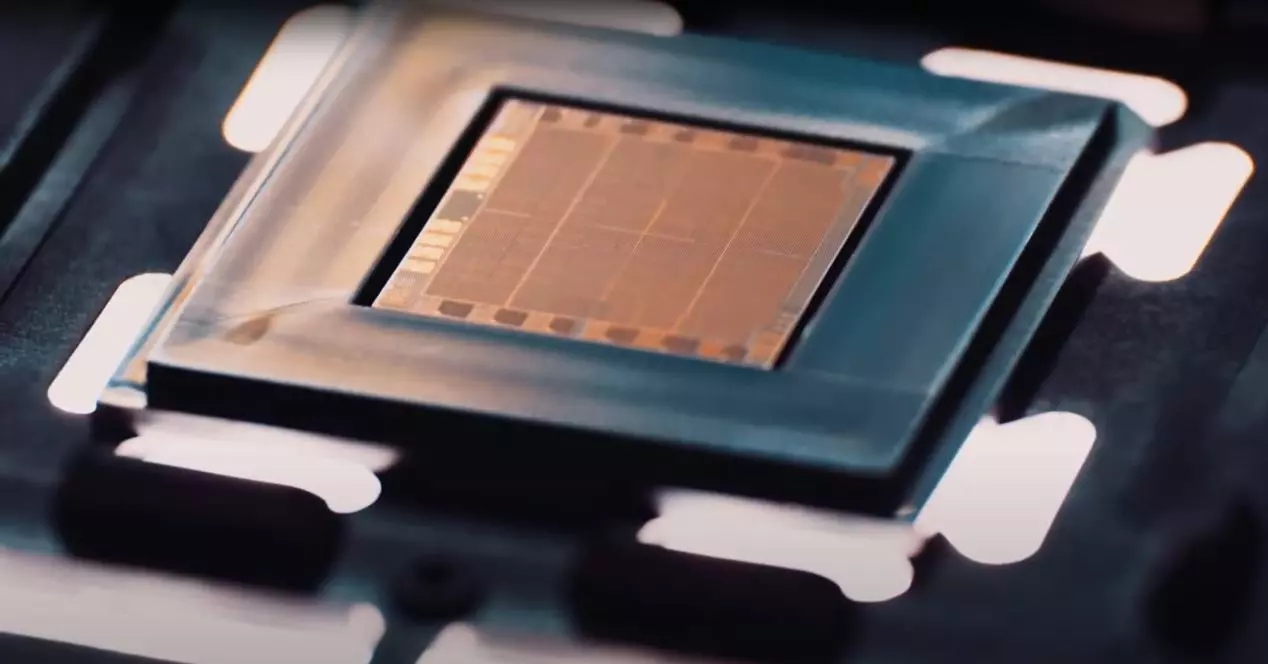

Little by little, binary became the standard in computer processors because it was the simplest and easiest to implement, requiring fewer transistors. This relegated decimal computing to specialized units designed specifically for it, or to simple binary-to-decimal data conversion instructions.

In other words, computers can operate with decimal numbers and even more abstract concepts such as complex and imaginary numbers. Of course, building a processor that works in binary involves far fewer complications than building a decimal one from scratch. The best-designed systems scale up from the simplest foundation, which here means working in binary.

Not available on tablets or PCs

Decimal units have not been common in the PC CPUs we use in our day-to-day lives, but many sectors need units capable of working in base 10 at high speed to perform their calculations. In fact, many of the first electronic computers, such as ENIAC, were designed to work with decimal numbers, since they were essentially electronic versions of the earlier mechanical calculators.

Currently, neither ARM nor x86 CPUs include hardware that operates natively on base-10 numbers. They do include some instructions for converting data written in decimal to binary, but with a trade-off: a possible loss of precision. As a result, the x86 processors we use in our PCs are not suitable for certain applications and certain markets.

How is this possible if many people use accounting applications on a PC every day, for example? The explanation is that the level of precision those applications need does not call for a unit that operates directly on decimals, so they rely on the conversion instructions in the ISA of the PC or the tablet to do their work.
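Software running on binary hardware can also sidestep fractional values entirely by storing amounts as whole numbers of the smallest unit, such as cents. The sketch below illustrates that general technique under the assumption of a two-decimal currency; it is not a description of how any particular accounting package works, and `format_cents` is a hypothetical helper.

```python
def format_cents(cents: int) -> str:
    """Render an integer amount of cents as a decimal string with two places."""
    sign = "-" if cents < 0 else ""
    units, fraction = divmod(abs(cents), 100)
    return f"{sign}{units}.{fraction:02d}"


# Ten payments of 0.10 added as binary floats drift away from 1.00 ...
float_total = sum([0.10] * 10)
print(float_total == 1.0)  # False

# ... while the same payments held as integer cents add up exactly.
cents_total = sum([10] * 10)
print(format_cents(cents_total))  # 1.00
```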

IBM is the queen of decimal computing

The oldest company in the hardware world is not Intel but IBM, a historic company in computing that has held large contracts with the major banks of the United States since the 1950s. Since then, its mainframes, with their ability to operate on decimal numbers, have handled accounting entries and various banking and financial operations, all of them carried out in base 10.

That is why the processors Big Blue designs for large-scale business use still include specialized decimal computing units today. The POWER processors of recent generations include a unit that works with decimals and, staying within International Business Machines, the System z10 also includes a unit of this type.

As a result, the banking and financial systems of Europe and the United States have been an extremely lucrative market for Big Blue in recent decades. The case is very similar to that of Fujitsu in Japan, whose processors based on the SPARC ISA, originally developed by Sun Microsystems, have dominated Japanese banks for years and continue to do so today.