According to the publication, Google researchers were able to use a reinforcement learning technique to design the next generation of TPUs (Tensor Processing Units), Google’s specialized Artificial Intelligence processors. This means that the internet giant’s next AI chips will be designed by the firm’s previous generation of AI chips.

Artificial Intelligence that designs chips for AI

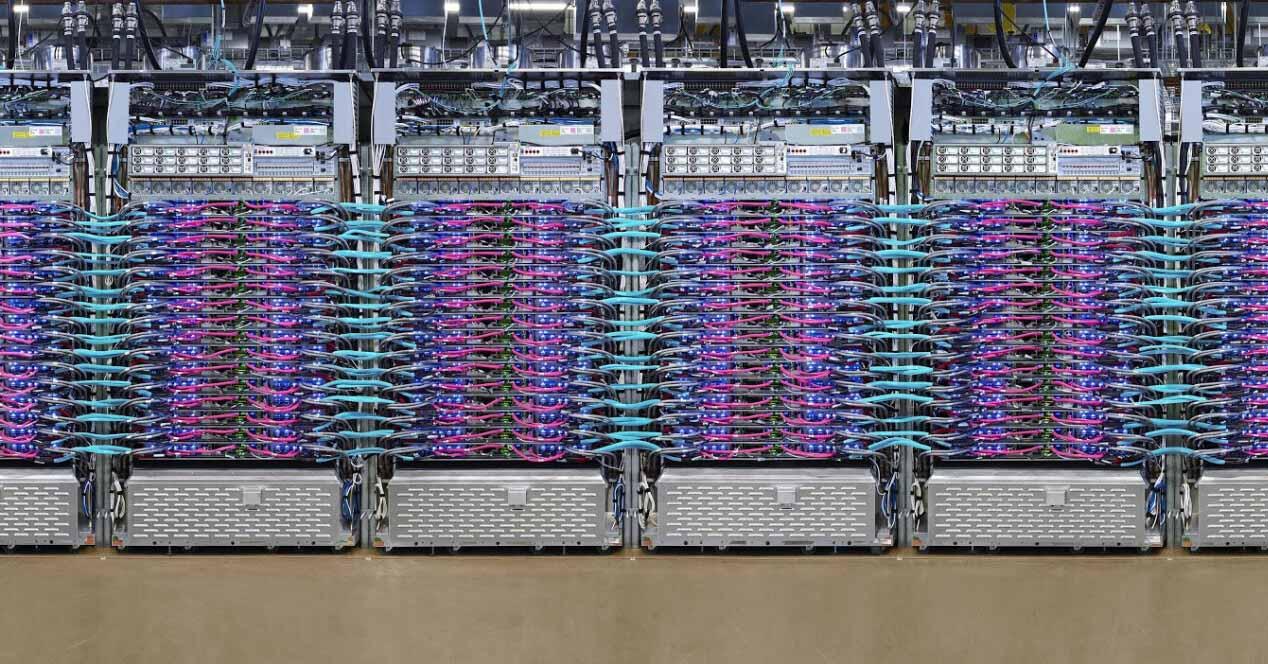

The use of software in chip design is not new, but according to the Google researchers, the new reinforcement learning model “automatically generates chip floorplans that are superior or comparable to those produced by humans in all key metrics, including power consumption, performance and chip area.” And it does so in a fraction of the time a human would need.

The claim that AI outperforms humans has attracted a lot of attention. One media outlet described the system as “Artificial Intelligence software that can design chips faster than humans” and wrote that “a chip that would take humans months to design can be devised by Google’s AI in less than six hours.” But what stands out when reading the Nature article is not the complexity of the system used to design the chips. It is the synergy between human and artificial intelligence.

Analogies, intuition and rewards

The paper describes the problem as follows: “Chip floorplanning involves placing netlists onto chip canvases (two-dimensional grids) so that performance metrics (for example, power consumption, timing, area and wirelength) are optimized, while adhering to hard constraints on density and routing congestion.”
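To make that objective concrete, here is a minimal Python sketch of two of the quantities the quote mentions. It rests on simplifying assumptions that do not come from the paper: every component occupies a single cell of an integer grid, and connection length is approximated by half-perimeter wirelength (HPWL), a common proxy in placement tools. The density constraint is modeled as a hypothetical cap on components per cell. The netlist, the component names and the `max_per_cell` parameter are all illustrative. Routing congestion is left out because estimating it requires a routing model.

```python
from collections import Counter

# Hypothetical netlist: each net lists the components it connects.
NETLIST = [
    ["cpu", "cache"],
    ["cpu", "dma", "io"],
    ["cache", "io"],
]

def hpwl(netlist, placement):
    """Half-perimeter wirelength: for each net, the width plus height of the
    bounding box around its components, summed over all nets."""
    total = 0
    for net in netlist:
        xs = [placement[c][0] for c in net]
        ys = [placement[c][1] for c in net]
        total += (max(xs) - min(xs)) + (max(ys) - min(ys))
    return total

def respects_density(placement, grid_w, grid_h, max_per_cell=1):
    """Hard-constraint check: every component lies on the canvas and
    no grid cell holds more than `max_per_cell` components."""
    for x, y in placement.values():
        if not (0 <= x < grid_w and 0 <= y < grid_h):
            return False
    counts = Counter(placement.values())
    return all(n <= max_per_cell for n in counts.values())

# Two candidate placements of the same netlist (coordinates are (x, y) cells).
compact = {"cpu": (0, 0), "cache": (1, 0), "dma": (0, 1), "io": (1, 1)}
spread = {"cpu": (0, 0), "cache": (3, 3), "dma": (3, 0), "io": (0, 3)}

if __name__ == "__main__":
    print(hpwl(NETLIST, compact), respects_density(compact, 4, 4))
    print(hpwl(NETLIST, spread), respects_density(spread, 4, 4))
```

Both placements are legal on a 4x4 canvas. The compact one scores an HPWL of 4 against 15 for the spread one, so an optimizer would prefer it.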

In essence, the goal is to position the components optimally. Yet, as with any combinatorial problem, finding optimal layouts becomes harder as the number of components on the chip increases, because the number of possible layouts grows explosively. This is precisely the difficulty AI seems to have solved, or at least mitigated.
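A quick count shows why larger chips are harder. Assume, purely for illustration, that each component occupies one grid cell and that no two components share a cell. Then n distinct components can be placed onto g cells in g!/(g−n)! ways. The sketch below computes that count. It also computes its base-10 logarithm through `math.lgamma`, which keeps the numbers manageable. The function names are hypothetical.

```python
import math

def num_layouts(components, cells):
    """Exact number of ways to put `components` distinct components
    into distinct cells of a grid with `cells` cells: cells!/(cells-components)!."""
    return math.perm(cells, components)

def log10_num_layouts(components, cells):
    """log10 of the same count, computed with lgamma (log of the factorial)
    so that very large cases don't require huge integers."""
    log_count = math.lgamma(cells + 1) - math.lgamma(cells - components + 1)
    return log_count / math.log(10)

if __name__ == "__main__":
    # Square case: as many cells as components, so the count is n!.
    for n in (4, 10, 100, 1000):
        print(f"{n} components: about 10^{log10_num_layouts(n, n):.1f} layouts")
```

With 1,000 components on 1,000 cells the count is 1000!, which exceeds 10^2567. That is far too many layouts to enumerate, which is why placement tools rely on heuristics and learned search rather than brute force.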

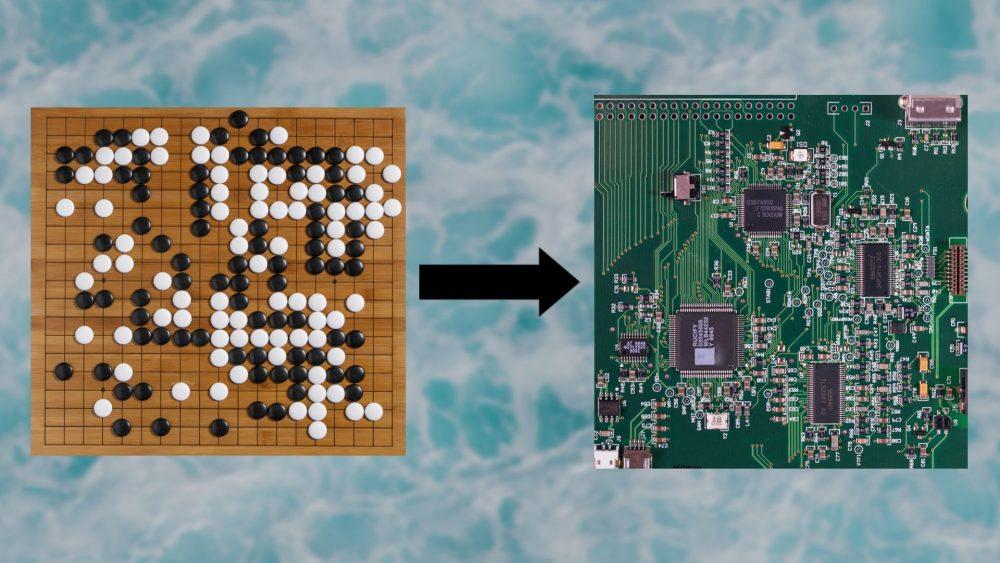

Existing software helps speed up the search for chip layouts, but it falls short as chips become more complex. The researchers decided to draw on the way reinforcement learning has solved other complex problems. That choice reflects one of the most important and complex aspects of human intelligence: analogy.

Humans can extract abstractions from a problem we have solved and apply them to other problems. We take this skill for granted, but it is what makes us so good at transfer learning. It is also why the researchers were able to reframe chip floorplanning as if it were a board game.

Deep reinforcement learning models can be especially good at searching very large spaces, a feat that is physically impossible with the computing power of the brain. The scientists made this search tractable with an artificial neural network that encodes chip designs as vector representations, which made exploring the problem space much easier. According to the paper, “our intuition was that a policy capable of the general task of chip placement should also be able to encode the state associated with a new unseen chip into a meaningful signal at inference time. We therefore trained a neural network architecture capable of predicting reward on placements of new netlists, with the ultimate goal of using this architecture as the encoder layer of our policy.”

The term intuition is often used loosely, but it refers to a complex and poorly understood process involving experience, unconscious knowledge, pattern recognition and other factors. Our intuitions come from years of work in one field, but they can also be drawn from experience in other areas. Fortunately, putting these insights to the test is getting easier with the help of high-powered computing and machine learning tools.

It’s also worth noting that reinforcement learning systems need a well-designed reward. In fact, some scientists believe that with the right reward function, reinforcement learning is enough to reach artificial general intelligence. Without the right reward, on the other hand, an RL agent can get stuck in endless loops.
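To see how much the reward's design matters, consider a sketch of one plausible reward for placement. It is a negative weighted sum of proxy costs, so an agent that maximizes reward minimizes cost. This is an illustrative assumption, not the paper's exact formulation. The `PlacementCosts` fields, the `congestion_weight` and `density_weight` parameters and the cost values are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class PlacementCosts:
    """Hypothetical proxy costs for one finished placement (lower is better)."""
    wirelength: float
    congestion: float
    density: float

def reward(costs, congestion_weight=0.5, density_weight=0.5):
    """Negative weighted sum of proxy costs: the RL agent maximizes reward,
    so every cost is negated."""
    return -(costs.wirelength
             + congestion_weight * costs.congestion
             + density_weight * costs.density)

# Placement a has slightly longer wires but far less congestion than b.
a = PlacementCosts(wirelength=10.0, congestion=2.0, density=4.0)
b = PlacementCosts(wirelength=9.0, congestion=8.0, density=6.0)

if __name__ == "__main__":
    print(reward(a), reward(b))              # weighted: a is preferred
    print(reward(a, 0, 0), reward(b, 0, 0))  # wirelength only: b is preferred
```

The same two placements swap rank depending on the weights. With congestion and density weighted, placement a scores higher. With only wirelength counted, the agent would favor the congested placement b. This is a small example of why a poorly chosen reward can steer an agent toward the wrong solutions.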