The biggest performance problem for any processor today is its distance from RAM, for two clear reasons: access speed and the energy spent moving data. The best remedy is a buffer memory inside the CPU itself, which we call cache, that holds the fragments of RAM the processor needs at any given moment. Unfortunately, the space available inside a central processing unit is limited.
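The speed side of this trade-off is often summarized with the textbook average memory access time formula, AMAT = hit time + miss rate × miss penalty. The sketch below assumes a single cache level in front of RAM, and the latencies in it are made-up illustrative values, not measurements of any AMD processor.

```python
def amat(hit_time_ns: float, miss_rate: float, miss_penalty_ns: float) -> float:
    """Average memory access time for one cache level in front of RAM."""
    if not 0.0 <= miss_rate <= 1.0:
        raise ValueError("miss_rate must be between 0 and 1")
    return hit_time_ns + miss_rate * miss_penalty_ns


# Hypothetical figures, chosen only for illustration:
# a 10 ns cache hit and an extra 80 ns to reach DRAM on a miss.
HIT_NS = 10.0
DRAM_PENALTY_NS = 80.0

# A larger cache helps mainly by lowering the miss rate.
for miss_rate in (0.50, 0.30, 0.10):
    print(f"miss rate {miss_rate:.0%}: AMAT = {amat(HIT_NS, miss_rate, DRAM_PENALTY_NS):.1f} ns")
```

With these assumed numbers, cutting the miss rate from 50% to 10% brings the average access time from 50 ns down to 18 ns, which is the kind of gain a larger last-level cache aims for.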

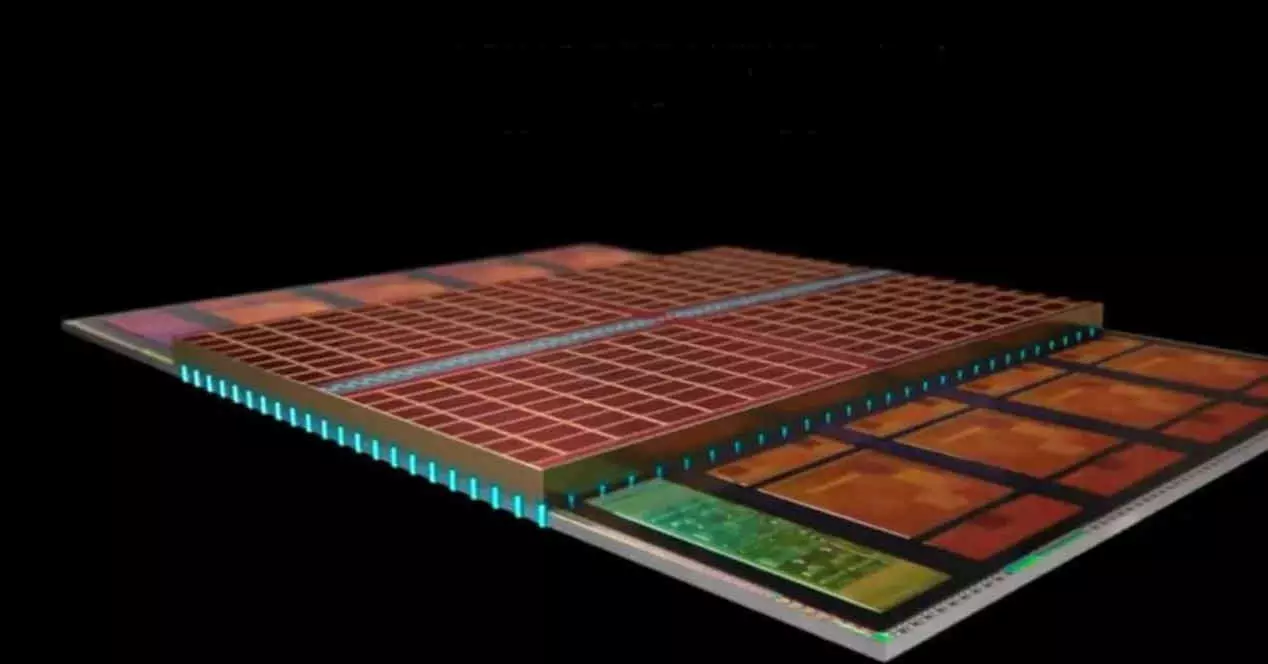

AMD’s so-called V-Cache is nothing more than a way to extend the L3 cache of the Zen 3 cores beyond its physical limit by stacking it on top of the CCD and using through-silicon vias (TSVs) for the interconnection. So far, however, we have not seen this cache extension applied to the company’s APUs. Why is that?

Is it possible that we will see AMD’s V-Cache in their future APUs?

The idea of stacking memory in 3D is not new: we saw it a few years ago in the SoCs of some smartphones with the Wide I/O interface, but there the goal was to place the RAM on top of the processor. AMD’s proposal, by contrast, is to increase the capacity of the CPU’s last-level cache, and this is where the fine print comes in. If we had to answer the question in the heading of this section, the short answer would be yes, but, as we said, with some fine print.

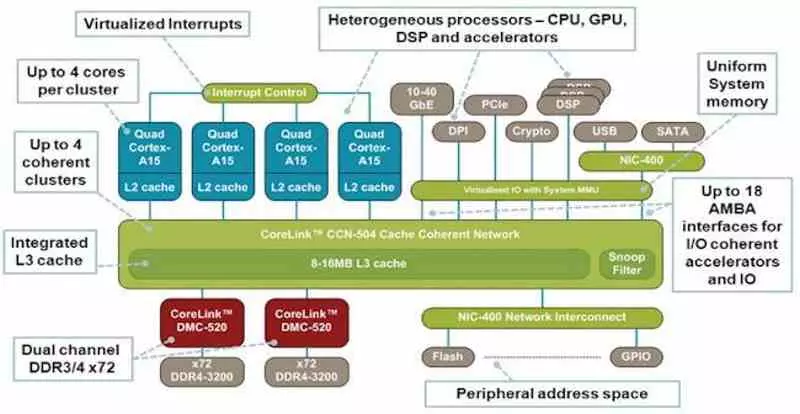

In an APU or a SoC (two names for the same kind of processor) we have several heterogeneous cores, that is, cores of different types, which communicate through a common interconnect with a single physical pool of RAM. This means that if we put V-Cache in a chip of this type, it would not work the way it does on the Zen 3 CCD chiplet. In short, the V-Cache in a future AMD APU would not be just the last-level cache for its CPU, but also for its GPU, acting as a common point for both.

A level of cache that sits beyond the cores but before the memory controller, or inside it, should not surprise us: it is common in smartphone processors, where it links the different components of the SoC. The reasoning is that if going out to RAM to read data written by another core is expensive in energy, it makes more sense to keep that data in an internal memory that all the components can access, which then becomes an additional cache level.
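The benefit of a cache level shared by the CPU and GPU can be illustrated with a toy model. This is a hypothetical sketch: `SharedLLC` and `producer_consumer` are illustrative names, the cache is fully associative with LRU replacement, every miss counts as one DRAM access, and write-back traffic is ignored. The CPU writes a buffer and the GPU then reads it back through the same cache.

```python
from collections import Counter, OrderedDict


class SharedLLC:
    """Toy last-level cache shared by several agents (for example CPU and GPU).

    Fully associative, LRU replacement, one entry per cache-line address.
    Each miss is counted as one DRAM access for the agent that caused it;
    dirty-line write-backs are ignored to keep the model small.
    """

    def __init__(self, capacity_lines: int) -> None:
        if capacity_lines <= 0:
            raise ValueError("capacity_lines must be positive")
        self.capacity = capacity_lines
        self.lines = OrderedDict()  # line address -> None, oldest first
        self.dram_accesses = Counter()  # agent name -> number of misses

    def access(self, agent: str, line: int) -> bool:
        """Touch one cache line; return True on a hit, False on a miss."""
        if line in self.lines:
            self.lines.move_to_end(line)  # mark as most recently used
            return True
        self.dram_accesses[agent] += 1  # miss: the line must come from DRAM
        if len(self.lines) >= self.capacity:
            self.lines.popitem(last=False)  # evict the least recently used line
        self.lines[line] = None
        return False


def producer_consumer(cache_lines: int, buffer_lines: int) -> Counter:
    """CPU writes a buffer, then the GPU reads the same buffer back."""
    llc = SharedLLC(cache_lines)
    for line in range(buffer_lines):
        llc.access("cpu", line)  # CPU produces the data
    for line in range(buffer_lines):
        llc.access("gpu", line)  # GPU consumes it through the same cache
    return llc.dram_accesses


for size in (64, 256):
    misses = producer_consumer(cache_lines=128, buffer_lines=size)
    print(f"buffer of {size} lines: CPU misses={misses['cpu']}, GPU misses={misses['gpu']}")
```

When the 64-line buffer fits in the 128-line shared cache, the GPU never goes to DRAM. When the buffer is 256 lines, LRU evicts each line before the GPU reaches it and all of its reads miss, which is why capacity matters so much for a cache shared this way.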

In addition, although the V-Cache would be a memory pool physically shared by the CPU and GPU, its internal addressing could be split into two parts: one kept coherent with the CPU, and another without coherence, reserved for the GPU and its accelerators and therefore not coherent with the central processing unit. This would be a way to bring the advantages of the Infinity Cache in AMD’s dedicated GPUs to its integrated ones.
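The split addressing described above can be sketched as a simple range check. Everything here is an assumption for illustration: the `SplitLLC` class, the agent names, the 64 MiB/32 MiB sizes, and the idea that the split is a single boundary in the cache’s internal address space. It does not describe any documented AMD design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SplitLLC:
    """Hypothetical shared cache whose internal address space has two slices.

    Offsets [0, coherent_bytes) are kept coherent with the CPU; the rest is a
    GPU-only slice the CPU never caches, so it needs no coherence checks.
    """

    total_bytes: int
    coherent_bytes: int

    def __post_init__(self) -> None:
        if not 0 <= self.coherent_bytes <= self.total_bytes:
            raise ValueError("coherent_bytes must lie between 0 and total_bytes")

    def region(self, offset: int) -> str:
        """Name of the slice that holds a given internal offset."""
        if not 0 <= offset < self.total_bytes:
            raise ValueError("offset is outside the cache")
        return "coherent" if offset < self.coherent_bytes else "gpu_only"

    def may_access(self, agent: str, offset: int) -> bool:
        """CPU: coherent slice only. GPU and its accelerators: both slices."""
        region = self.region(offset)  # also validates the offset
        if agent == "cpu":
            return region == "coherent"
        return agent in ("gpu", "accelerator")

    def needs_coherence_check(self, offset: int) -> bool:
        """Only the slice the CPU can also see needs coherence traffic."""
        return self.region(offset) == "coherent"


MIB = 2**20
llc = SplitLLC(total_bytes=64 * MIB, coherent_bytes=32 * MIB)  # illustrative sizes
print(llc.region(40 * MIB), llc.may_access("cpu", 40 * MIB), llc.may_access("gpu", 40 * MIB))
```

In this sketch the CPU only reaches the first 32 MiB, while lines in the GPU-only half skip coherence checks entirely, which is the property that would let that slice behave like a dedicated GPU cache.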