The popularity of ChatGPT, the chatbot created by OpenAI, has spurred big technology companies to accelerate their plans to incorporate generative artificial intelligence into their products and services. The new Bing, the long-awaited Bard, applications with copilot mode… 2023 is shaping up to be the year of generative AI, and March is not even over yet. We will probably see a certain slowdown over the next few months, as it seems very difficult to maintain the pace of this first quarter, but even so we can expect new developments and interesting announcements.

The value that artificial intelligence can bring in many contexts is exceptional. From tasks as simple as helping us manage our email to substantially speeding up medical research, its reach promises to be nearly universal, and implementing it properly could be tremendously beneficial in many ways.

There are, however, well-founded fears about the potential negative consequences of artificial intelligence. Just yesterday we shared a reflection on the matter, following the open letter signed by more than 1,000 science and technology professionals. Misuse of AI can have catastrophic effects for multiple reasons, which is why the signatories of the letter ask that current development be paused for six months, to allow regulators to analyze the risks and propose regulatory measures to mitigate them.

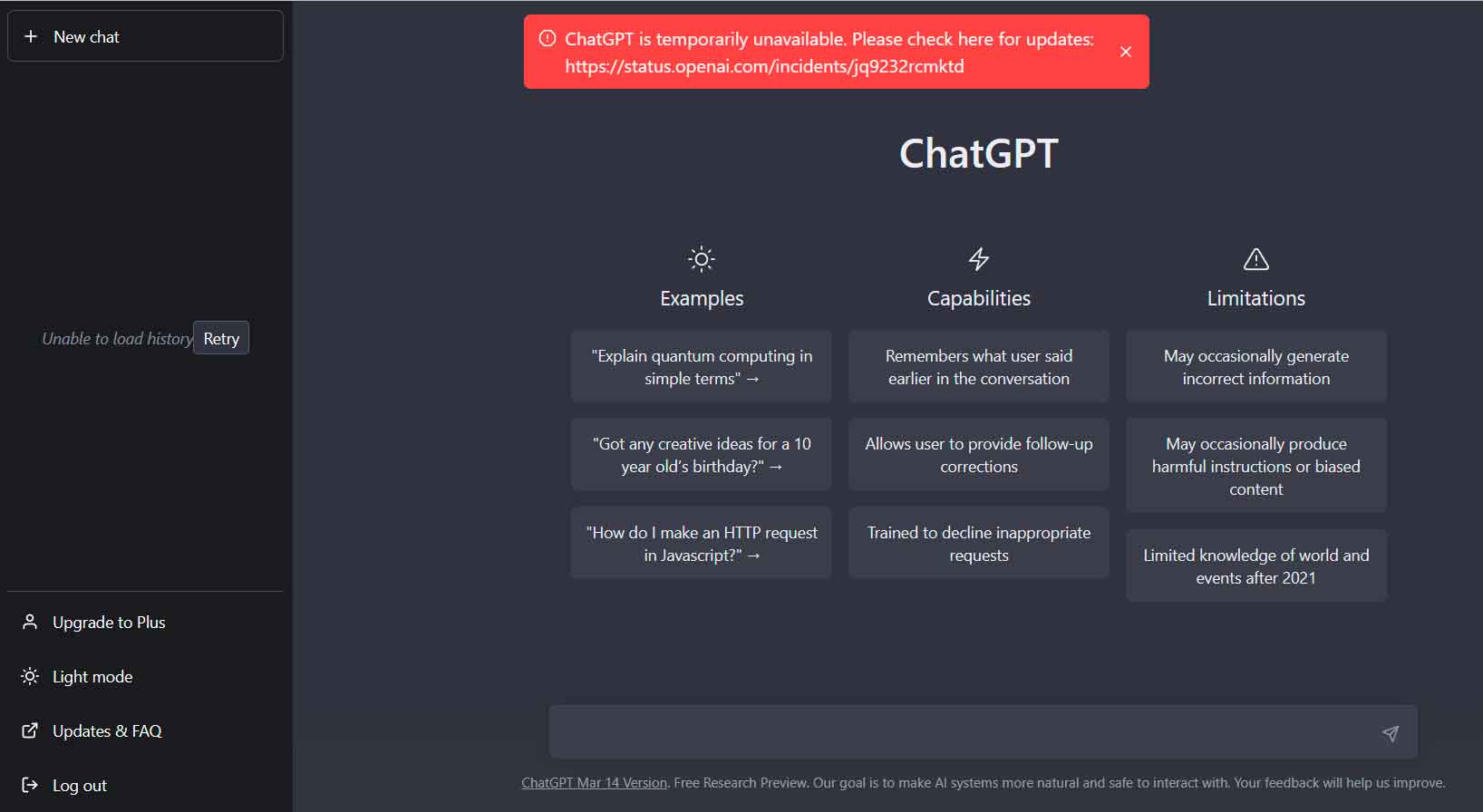

However, not all risks are of this type. There are others that we have known about for a long time and that are not exclusive to artificial intelligence, and a clear example is the protection of user data. You may recall that OpenAI recently acknowledged a limited leak of ChatGPT user data. Well, this incident has already begun to have legal consequences for the company.

The GPDP (Garante per la Protezione dei Dati Personali), the Italian personal data protection regulator, has ordered access to ChatGPT to be blocked in Italy because of problems in the collection and management of data, as well as in the verification of the age of its users, as reported today in a press release. The measure comes in response to last week’s incident, but is not exclusively focused on it.

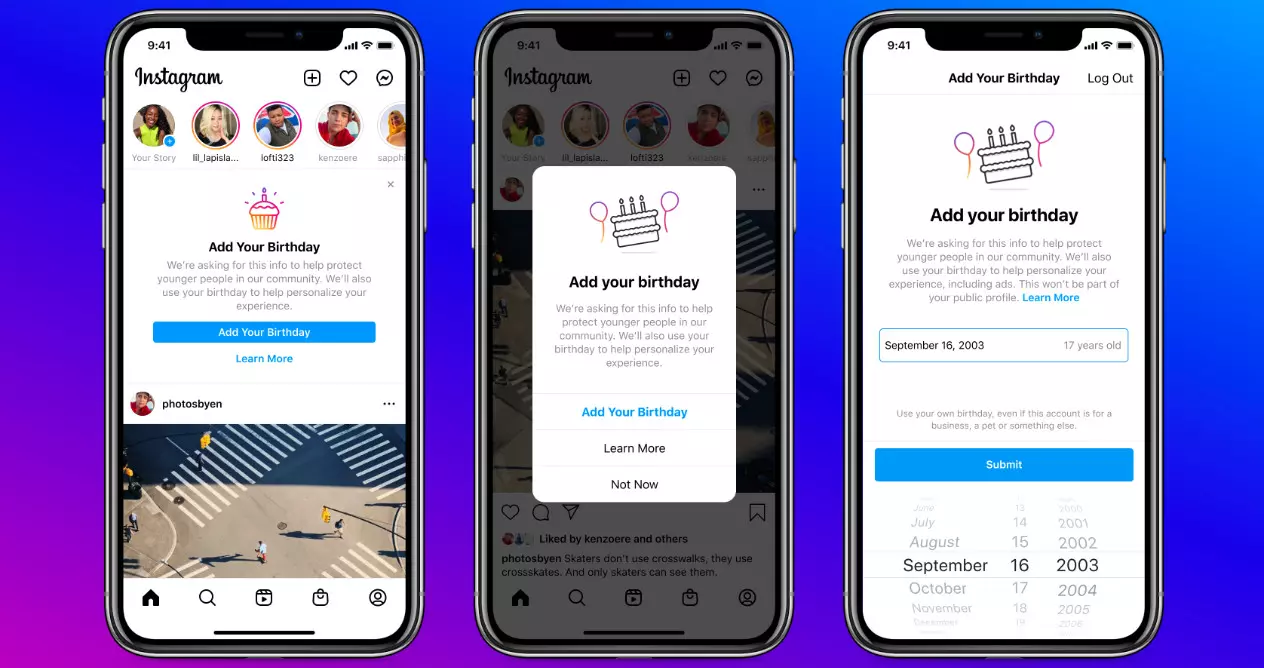

The ChatGPT block in Italy is temporary, since it is subject to OpenAI solving the problems identified by the Italian regulator, which cites “the absence of a legal basis that justifies the collection and mass storage of personal data, for the purpose of ‘training’ the algorithms that underlie the operation of the platform”, and also notes that “although, according to the terms published by OpenAI, the service is aimed at people over 13 years of age, the Authority points out that the absence of any filter to verify the age of users exposes minors to completely inappropriate responses”.

This move is more important than it may seem at first glance, since the GPDP’s actions are grounded in the GDPR, the European data protection regulation, which applies throughout the EU. In other words, if it is determined that ChatGPT does not comply with European law, we can expect similar moves from other regulators on the old continent.

As the regulator’s press release states, OpenAI has already appointed a representative for the European jurisdiction, and the company now has 20 days to inform the authorities of the measures it has adopted to solve the problems identified by the regulator. The release ends with a reminder that, if it fails to act, the creators of ChatGPT face a fine of up to 20 million euros or up to 4% of annual global turnover.