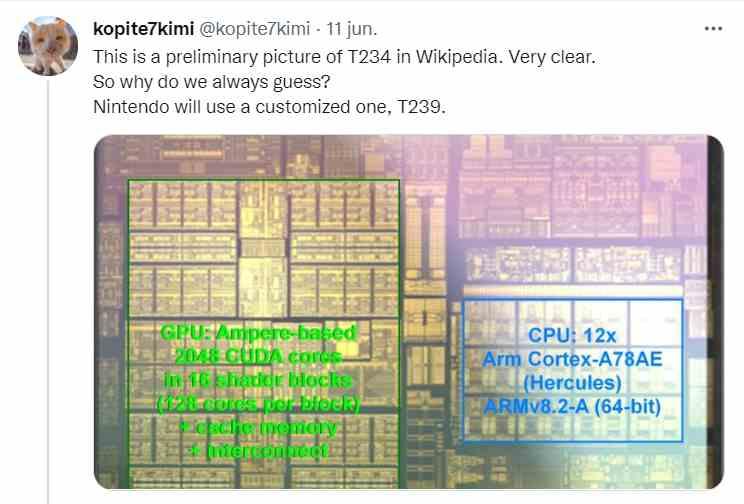

So far, the only information about the next Nintendo console that we could consider truly reliable came from the insider Kopite7kimi, who has proven his reliability several times on everything related to NVIDIA and who posted the following on his Twitter account a few months ago:

T234 is the model number of the Tegra Orin, so Nintendo will use a custom version of it known as T239. Both will be manufactured on Samsung’s 8 nm node, like the current RTX 30 series, but could use a GPU based on the Lovelace architecture of the future RTX 40 series.

It should be clarified that Kopite does not claim that Lovelace will be the graphics architecture that NVIDIA implements in the next Tegra. Since the manufacturing node is the same as that of the current RTX 30 series, it would make sense for both the T234 and the T239 to use the current GeForce Ampere architecture.

NVIDIA and Nintendo will be together again on Switch 2

The appearance of a patent assigned to Nintendo under the title SYSTEMS AND METHODS FOR MACHINE LEARNED IMAGE CONVERSION confirms that the historic Kyoto-based video game company is going to have NVIDIA as a partner again for its next-generation console, the successor to its current Switch.

How can we be sure of this? First, the patent refers to the use of neural networks to increase resolution, and no previous Nintendo console has included units of this type. Second, the text of the patent refers to NVIDIA’s Tensor Cores.

Let’s not forget that the SoC of the Nintendo Switch is the Tegra X1, with a GPU based on NVIDIA’s Maxwell architecture, which is two generations older than Volta, the first graphics architecture from Jensen Huang’s company to implement systolic arrays. As for the process described in the patent, although it is a deep learning super-resolution system like NVIDIA’s DLSS, the patent does not describe that technique but rather a technology developed internally by Nintendo itself.

Before defining what we can expect from Switch 2, we must clarify that Nintendo has not officially confirmed that it has any hardware under development, even though the patent has been assigned to the company and its inventors are part of its well-known European department NERD (Nintendo European Research and Development), which is based in France.

Why has Nintendo published a neural network patent for Switch 2?

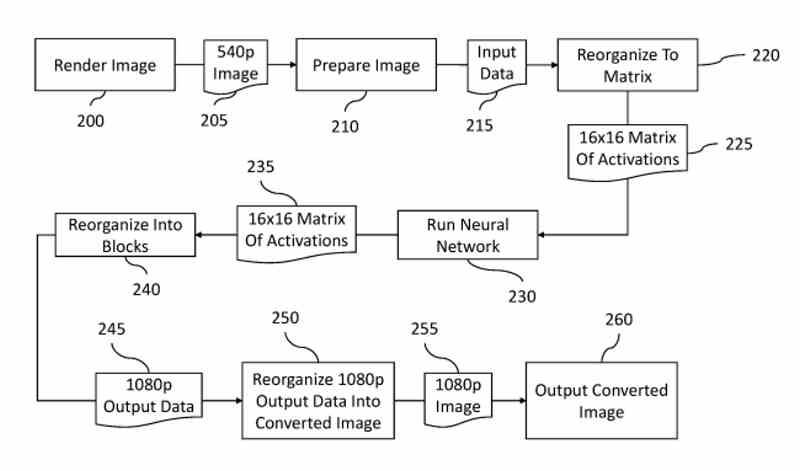

The patent describes a deep learning upscaling process that takes a 540p image as its reference and generates a 1080p image from it. This may come as a surprise amid the intermittent rumors of a 4K Switch that have appeared in recent years, but let’s not forget that, in terms of hardware, the Nintendo console is primarily a handheld.
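To put those two resolutions in perspective, here is a minimal Python sketch of the pixel arithmetic. The function name is purely illustrative, and only the 540p and 1080p figures come from the patent as described above.

```python
def pixel_count(width: int, height: int) -> int:
    """Number of pixels in a single frame."""
    return width * height


SOURCE = (960, 540)    # example input resolution mentioned in the patent
TARGET = (1920, 1080)  # resolution of the upscaled output

source_pixels = pixel_count(*SOURCE)
target_pixels = pixel_count(*TARGET)
ratio = target_pixels / source_pixels

print(source_pixels, target_pixels, ratio)  # 518400 2073600 4.0
```

In other words, the GPU would only have to render a quarter of the pixels that end up on screen.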

The problem of RAM bandwidth in low-power devices has still not been solved, and we face a paradox: GPUs in very low-power SoCs have reached performance similar to that of PS4 and Xbox One, yet they are paired with RAM whose very low bandwidth acts as a bottleneck. So it makes sense to reduce the amount of data that the GPU has to render internally so that slow transfer speeds are not an issue.

The safest bet is that Nintendo will use LPDDR5, or at most LPDDR5X, memory in its next console, which means at most doubling the bandwidth compared to Switch. The current console has power equivalent to PS3 and Xbox 360, and the next generation is expected to reach the power of PS4 and Xbox One. The problem is the memory, since the chosen type does not provide enough bandwidth.
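As a rough illustration of where a "doubling" could come from, the sketch below computes theoretical peak bandwidth from bus width and transfer rate. The figures are assumptions chosen for illustration and are not taken from the patent: a 64-bit bus on both machines, LPDDR4 at 3200 MT/s for the current Switch, and LPDDR5 at 6400 MT/s for a hypothetical successor.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, transfers_mt_s: int) -> float:
    """Theoretical peak bandwidth in GB/s: bytes per transfer x transfers per second."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * transfers_mt_s / 1000  # MB/s -> GB/s


# Assumed configurations (illustrative only, see the paragraph above).
switch_lpddr4 = peak_bandwidth_gb_s(bus_width_bits=64, transfers_mt_s=3200)
successor_lpddr5 = peak_bandwidth_gb_s(bus_width_bits=64, transfers_mt_s=6400)

print(switch_lpddr4, successor_lpddr5, successor_lpddr5 / switch_lpddr4)
```

Under these assumptions the result is roughly 25.6 GB/s against 51.2 GB/s, that is, twice the bandwidth for a GPU that is expected to be considerably more than twice as powerful.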

This is how Nintendo’s neural network patent works to achieve more resolution

The first thing to bear in mind is that a patent of this type describes a process, and what each of its elements does, in an orderly manner to obtain a result for specific purposes. It is as if the patent were a cooking recipe in which each ingredient and each transformation step is described individually.

And what does the patent tell us? Let’s see this step by step:

- An image is generated at 960 x 540 pixels. The patent says that other resolutions are possible; this one is simply used as an example.

- The image buffer is organized into blocks of 4 x 4 pixels each.

- From each of these blocks, another block of 8 x 8 pixels is generated. The values of the new pixels are obtained by applying a mathematical function to the neighboring pixels. The patent does not specify which function, but it could be bicubic interpolation, Lanczos or any similar technique.

- The next step is to generate a 16 x 16 matrix from the information of each RGB channel. The rest of the matrix, where no information is available, is filled with zeros.
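The steps above can be sketched in a few lines of plain Python. This is only an approximation under stated assumptions: pixel replication stands in for the unspecified interpolation function, and the layout of the 16 x 16 matrix (one 8 x 8 quadrant per colour channel, with the fourth quadrant left at zero) is a guess, since it is not detailed here. All function names are hypothetical.

```python
from typing import List

Block = List[List[float]]


def split_into_blocks(channel: Block, size: int = 4) -> List[Block]:
    """Cut one colour channel into size x size blocks, row by row."""
    height, width = len(channel), len(channel[0])
    blocks = []
    for by in range(0, height, size):
        for bx in range(0, width, size):
            blocks.append([row[bx:bx + size] for row in channel[by:by + size]])
    return blocks


def upscale_2x(block: Block) -> Block:
    """Double a block in each dimension by pixel replication.

    Assumption: a stand-in for bicubic, Lanczos or a similar filter.
    """
    out = []
    for row in block:
        wide = [value for value in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


def pack_16x16(red: Block, green: Block, blue: Block) -> Block:
    """Place three 8 x 8 channel blocks in a zero-filled 16 x 16 matrix.

    Assumption: the quadrant layout is illustrative, not the patent's.
    """
    matrix = [[0.0] * 16 for _ in range(16)]
    for y in range(8):
        for x in range(8):
            matrix[y][x] = red[y][x]        # top-left quadrant
            matrix[y][x + 8] = green[y][x]  # top-right quadrant
            matrix[y + 8][x] = blue[y][x]   # bottom-left quadrant
    return matrix                           # bottom-right quadrant stays zero


# A tiny 4 x 4 test image (a single block), one list of rows per channel.
red = [[1.0] * 4 for _ in range(4)]
green = [[2.0] * 4 for _ in range(4)]
blue = [[3.0] * 4 for _ in range(4)]

tiles = [
    pack_16x16(upscale_2x(r), upscale_2x(g), upscale_2x(b))
    for r, g, b in zip(
        split_into_blocks(red), split_into_blocks(green), split_into_blocks(blue)
    )
]
```

Three 8 x 8 channels add up to 192 values, which leaves 64 of the 256 cells at zero. A full 960 x 540 frame would produce 240 x 135 = 32,400 such matrices.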

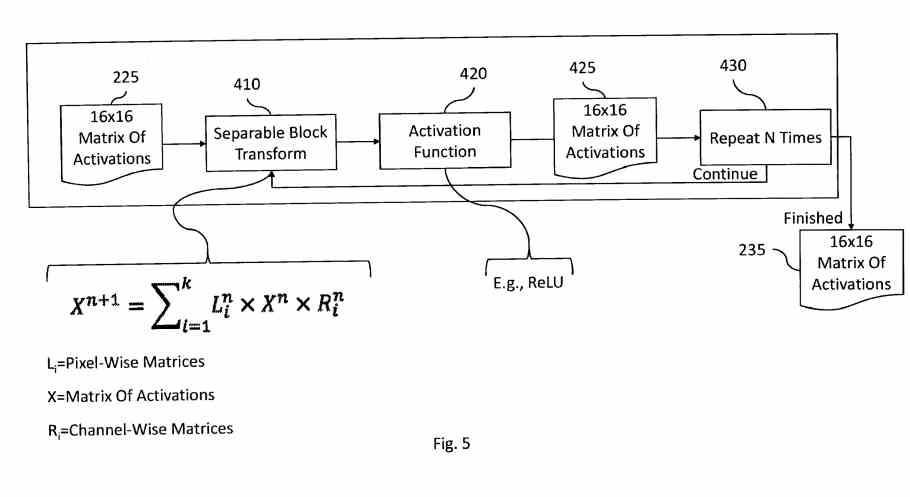

This 16 x 16 matrix is the input data for the neural network in Nintendo’s patent, which will run on the Tensor Cores. As an aside, it should be clarified that on NVIDIA’s PC GPUs these units support 16-bit floating-point calculations. As a further detail, each of the ALUs that make up the systolic array supports SIMD within a register (SWAR) and can execute two 8-bit operations per ALU, and the total number of operations per sub-core in the SMs of NVIDIA GPUs is precisely 256, or 16 x 16.
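To give an idea of the kind of work a matrix unit such as a Tensor Core performs, here is a minimal sketch of a multiply-accumulate, D = A x B + C, on 16 x 16 matrices in plain Python. The weights and bias are placeholders (an identity matrix and a constant), not the network described in the patent, and real hardware works in reduced precision and in parallel.

```python
from typing import List

Matrix = List[List[float]]


def matmul_accumulate(a: Matrix, b: Matrix, c: Matrix) -> Matrix:
    """Return a x b + c for square matrices of the same size."""
    n = len(a)
    return [
        [sum(a[i][k] * b[k][j] for k in range(n)) + c[i][j] for j in range(n)]
        for i in range(n)
    ]


N = 16
# Placeholder weights: an identity matrix leaves the input unchanged.
weights = [[1.0 if i == j else 0.0 for j in range(N)] for i in range(N)]
# Stand-in for one packed 16 x 16 input matrix.
tile = [[float(i * N + j) for j in range(N)] for i in range(N)]
# Placeholder bias added to every element.
bias = [[0.5] * N for _ in range(N)]

result = matmul_accumulate(weights, tile, bias)
multiplications = N ** 3  # scalar multiplies needed for one 16 x 16 product

print(result[0][0], result[15][15], multiplications)  # 0.5 255.5 4096
```

A single 16 x 16 product already takes 4,096 multiplications, and a frame is made up of thousands of blocks, which is why dedicated matrix hardware matters here.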

The final step, once the inference algorithm has run, is the conversion of the generated information into a 1080p image. Remember that the goal of the inference process is none other than to generate a higher-resolution image as if the GPU had rendered it natively.

Automatic update for current Switch games?

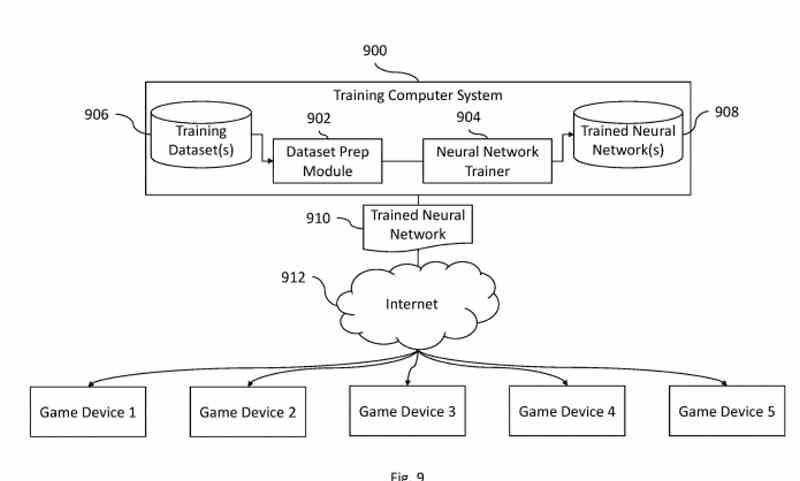

Since the Nintendo patent describes an algorithm that runs on a neural network, and is therefore a form of artificial intelligence, it needs a training process to obtain the inference algorithm that will generate the higher-resolution image.

The problem is that, because each game has its own visual style, each one needs its own custom training. The patent makes it clear that the training will be done in cloud data centers that developers can use to generate the inference algorithms for their games. So from the outset, games will not be able to use this upscaling technique directly if they are not prepared for it, but they will be able to take advantage of it through specific updates.

Finally, the patent refers to the use of temporal information, as in NVIDIA DLSS 2.0 or Intel XeSS. One example is motion vectors, which are derived by tracking the position of each object across several frames to obtain its velocity; in other words, data with an associated time dimension. In fact, the patent makes it clear that the upscaling process does not work at the system level but per game, so each game will have to implement it individually.
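As a simple illustration of what a motion vector is, the following sketch derives one from the screen position of an object in two consecutive frames. The function names and the 60 frames per second figure are assumptions made for the example, not details taken from the patent.

```python
from typing import Tuple

Vec2 = Tuple[float, float]


def motion_vector(prev_pos: Vec2, curr_pos: Vec2) -> Vec2:
    """Screen-space displacement between two consecutive frames, in pixels."""
    return (curr_pos[0] - prev_pos[0], curr_pos[1] - prev_pos[1])


def velocity(prev_pos: Vec2, curr_pos: Vec2, frames_per_second: float) -> Vec2:
    """Displacement per frame converted to pixels per second."""
    dx, dy = motion_vector(prev_pos, curr_pos)
    return (dx * frames_per_second, dy * frames_per_second)


def reproject(curr_pos: Vec2, vector: Vec2) -> Vec2:
    """Where a pixel of the current frame was located in the previous frame."""
    return (curr_pos[0] - vector[0], curr_pos[1] - vector[1])


previous = (100.0, 200.0)  # object position in frame N-1
current = (103.0, 198.0)   # object position in frame N

mv = motion_vector(previous, current)
print(mv)                                # (3.0, -2.0)
print(velocity(previous, current, 60.0)) # (180.0, -120.0)
print(reproject(current, mv))            # (100.0, 200.0)
```

Temporal upscalers use this kind of reprojection to find where each pixel was in earlier frames, so that detail accumulated over time can be reused.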