The green giant is working on a version of the NVIDIA A100 equipped with liquid cooling, and the first images have already surfaced. For now, it appears that this cooling option will be limited to the PCIe model.

The NVIDIA A100 is a high-performance graphics accelerator that was NVIDIA's most powerful in this sector until the unveiling of Hopper. Liquid cooling has been gaining popularity in data centers, mainly because server power consumption has risen sharply and, with it, the need to dissipate more heat.

As many of our readers will know, immersion cooling is one of the professional sector's big bets, but it requires a significant investment that not every company can, or wants to, take on. Localized liquid cooling applied only to the components that really need it is a cheaper and simpler option to implement, which is why products like this new NVIDIA A100 still make a lot of sense.

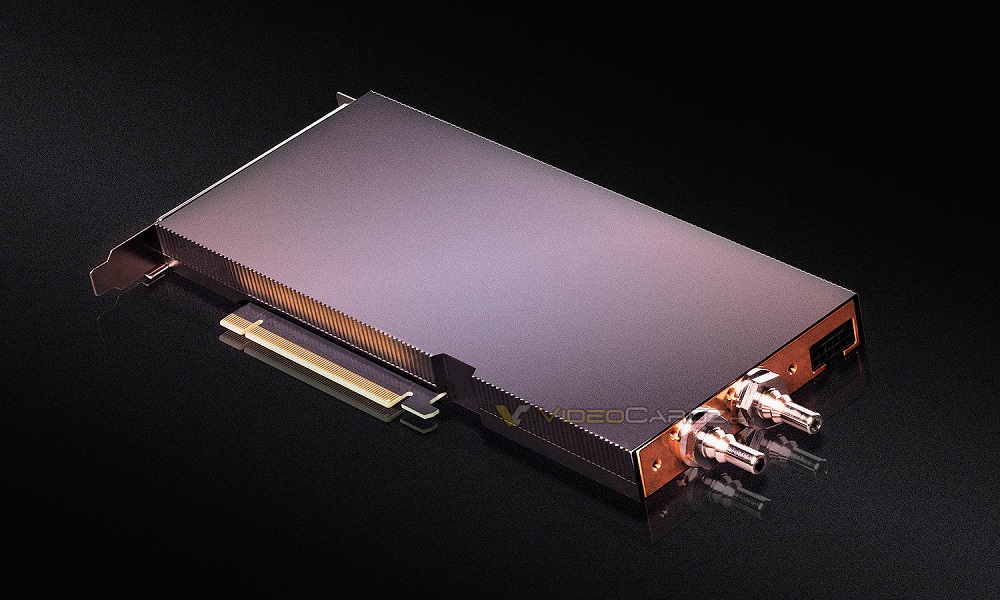

In terms of design, the new liquid-cooled NVIDIA A100 has a very slim profile (it occupies a single expansion slot), two fittings for the tubes that connect it to a liquid cooling loop, and a single additional 8-pin power connector.

The block appears to be very compact; it is presumably made of copper and should make contact with all the key components of the accelerator. Kits for fitting this type of cooling to an NVIDIA A100 already existed, but installing them required some risky work on the user's part. The attached image shows one such custom NVIDIA A100 with a liquid cooling block.

The liquid-cooled NVIDIA A100 spares us that step, along with the anxiety of disassembling the cooler that ships with the reference model. We have no details on its possible price, but we expect its specifications to be identical to those of the reference model: 6,912 shaders, a 5,120-bit memory bus, 80 GB of HBM2e memory and 432 Tensor cores for AI, inference and deep learning.