Ever since G-SYNC technology appeared, one aspect of every monitor that uses one of its modules has been criticized: the price premium. FreeSync arrived as the competition, but it is not really a competitor, has never been one, and does not even pretend to be: it uses no physical hardware and relies entirely on software synchronization, like G-SYNC Compatible, so there is no price increase. But do you know how much a G-SYNC module costs? You will be astonished.

If you are one of those users who complain about the price of G-SYNC monitors, after this article you may think they are actually a bargain. Naturally, we are not going to compare what a FreeSync monitor costs against one with G-SYNC or G-SYNC Compatible. That would be pointless, mainly because they do not target the same user or the same gaming experience. But thanks to a little research by Igor’s Lab, we now know a bit more about what NVIDIA does with these physical modules and chips.

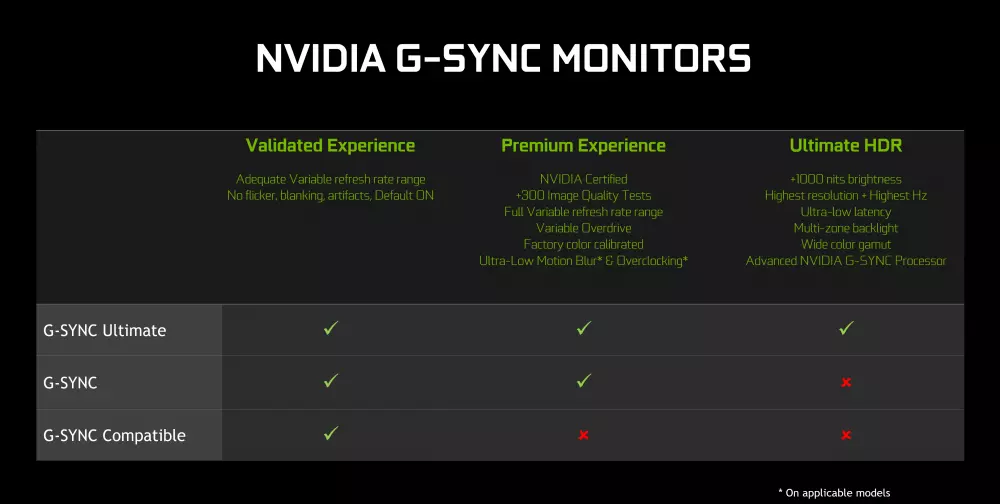

G-SYNC vs G-SYNC Ultimate, different modules

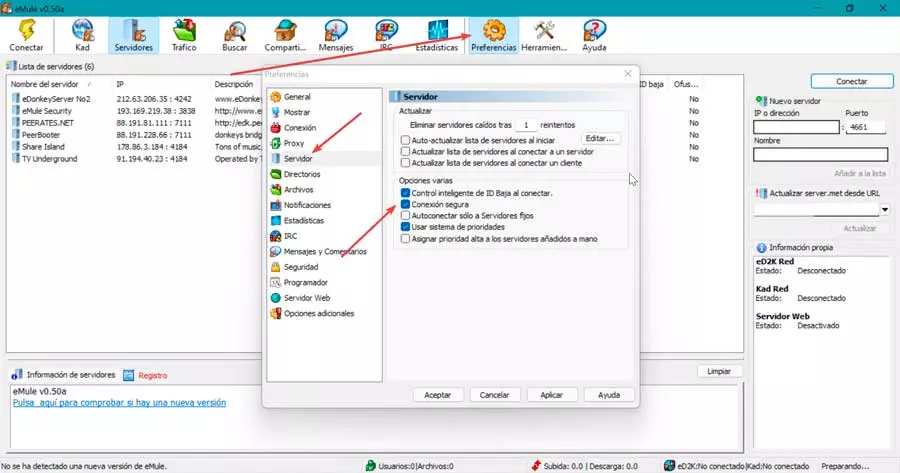

It may seem obvious, but as a general rule, and especially when the monitors being compared differ greatly in size and performance, NVIDIA uses different G-SYNC modules and tests the panel against a series of quality criteria.

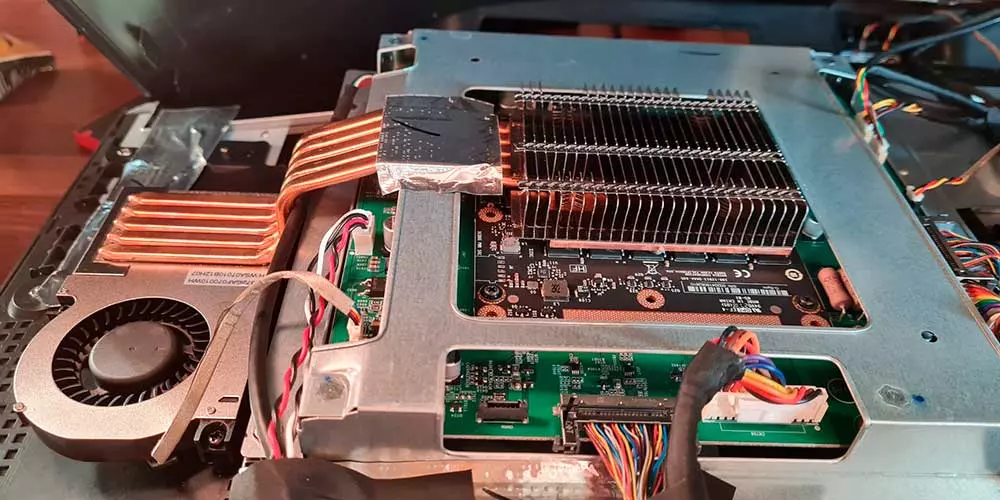

The module discussed here belongs to the brand's top tier, G-SYNC Ultimate, and the monitor examined is an AOC Agon AG353UCG, which costs between 1,800 and 2,300 euros depending on the country and the store. In this type of monitor, and in many others, there is a very serious problem with the NVIDIA module: it gets very hot.

For this reason, many manufacturers have had to resort to active cooling with a small fan, on top of a heatsink that could well be used for some low-end CPUs. What we find behind this heatsink with 6 (no small number) is an MXM card with an Altera Arria 10AX048H2F34E1HG-ND chip attached to two VRAM modules made by Micron (in most monitors).

The chip on this card is actually an FPGA that comes from Intel and, curiously, is manufactured by TSMC on a 20 nm process. It operates at a voltage of between 0.8 V and 0.9 V, with a working temperature range of 0 to 100 °C. Its power consumption, however, is not specified.

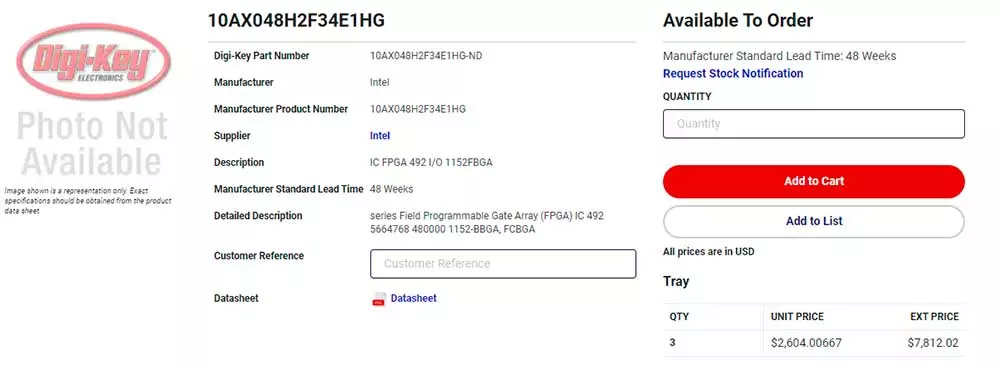

A crazy price for the G-SYNC module

If we look up this chip in the catalog of any major electronic component vendor, we see something really surprising: $2,604 if we buy just one. Assuming that NVIDIA buys literally millions of units, how far can the price eventually drop? We do not know, and we will surely never have that information. But considering that it is currently hard to find a monitor with a G-SYNC module for less than 800 euros, what is going on?

How can NVIDIA offer manufacturers an MXM card with its module at an attractively low price? And if the price could not be lowered by much (by a great deal, in fact), who absorbs the cost of selling a monitor for almost what the chip itself is worth?

And of course, given that price, and even assuming a huge discount for buying millions of units, why doesn’t NVIDIA design its own FPGA chip? Could the purchase of ARM have something to do with this too? These questions remain unanswered for now, though we may learn the answers as events unfold.

In any case, G-SYNC monitors may not seem so expensive after all.