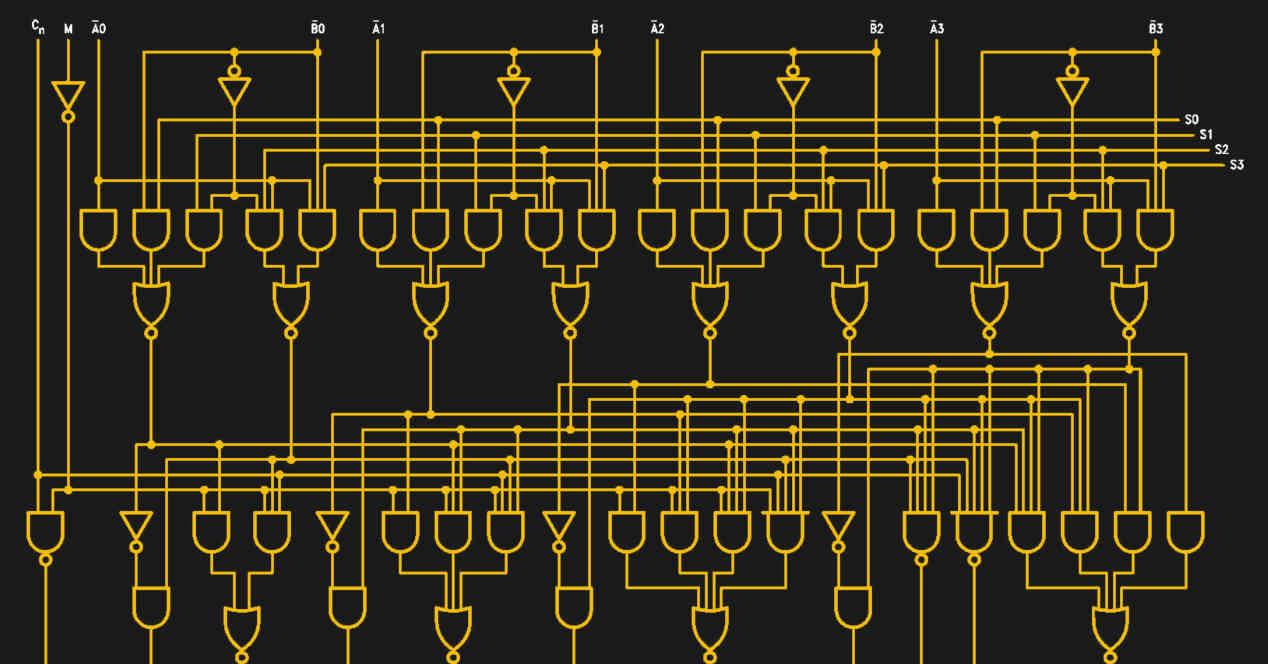

The first ALU to be released was not part of a CPU but a standalone chip: the 74181 from Texas Instruments, a member of the 7400 series of TTL logic, was the first ALU integrated on a single chip. It was only 4-bit and was used in various minicomputers during the 1970s, marking the first great transition in computing.

When the first complete CPUs were built during the 70s, with all the elements needed to execute a complete instruction cycle, they naturally had to integrate an ALU inside the chip to calculate the logic and arithmetic instructions.

Types of ALU

We can classify ALUs in two different ways. The first is by the type of number they calculate with, that is, whether they operate on integers or on floating point, where in the latter case we are talking about operating with numbers that have a fractional part. Floating-point formats follow a standard, typically IEEE 754, that indicates how many bits of the number correspond to the sign, how many to the exponent and how many to the fraction (significand).

The standards in both cases also indicate whether the first bit marks the sign or not. For example, an 8-bit integer can represent a value from 0 to 255 if it is unsigned, from -128 to 127 in two's complement, or from -127 to 127 in sign-magnitude, depending on the format used.
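To make the formats above concrete, here is a minimal sketch that reads the same bits under different conventions. It assumes IEEE 754 single precision for the floating-point case, and the function names are only illustrative.

```python
import struct

def float32_fields(value):
    """Split a number, encoded as IEEE 754 single precision, into its bit fields."""
    # Pack as a 32-bit float, then reread the same bytes as an unsigned integer.
    (bits,) = struct.unpack(">I", struct.pack(">f", value))
    sign = bits >> 31                # 1 bit
    exponent = (bits >> 23) & 0xFF   # 8 bits, stored with a bias of 127
    fraction = bits & 0x7FFFFF       # 23 bits of the significand
    return sign, exponent, fraction

def as_unsigned(bits):
    """Read 8 bits as an unsigned integer (0 to 255)."""
    return bits & 0xFF

def as_twos_complement(bits):
    """Read 8 bits as a two's complement integer (-128 to 127)."""
    bits &= 0xFF
    return bits - 256 if bits & 0x80 else bits

def as_sign_magnitude(bits):
    """Read 8 bits as sign-magnitude: first bit is the sign (-127 to 127)."""
    bits &= 0xFF
    magnitude = bits & 0x7F
    return -magnitude if bits & 0x80 else magnitude

pattern = 0b11111111
print(as_unsigned(pattern), as_twos_complement(pattern), as_sign_magnitude(pattern))
# prints: 255 -1 -127
print(float32_fields(-6.5))
# prints: (1, 129, 5242880)
```

The bit pattern never changes; only the rule used to interpret it does, which is why the format has to be fixed by a standard.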

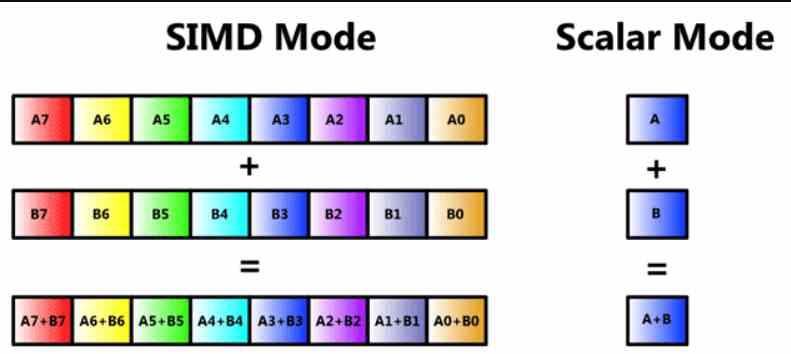

The second categorization refers to how many data items an ALU processes at the same time. The simplest form is the scalar ALU, where each instruction performs one operation on a single set of operands. We also have SIMD or vector units, which perform the same instruction on several sets of operands at the same time.
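The difference between the two can be sketched as follows. This is only a model: Python runs the lanes one after another, whereas a real vector unit would process them in parallel, and the function names are hypothetical.

```python
def scalar_add(a, b):
    """One instruction, one pair of operands, one result."""
    return a + b

def simd_add(lanes_a, lanes_b):
    """One instruction applied to every pair of operands (lane) at once."""
    if len(lanes_a) != len(lanes_b):
        raise ValueError("both vectors must have the same number of lanes")
    # In hardware each lane has its own adder, so all sums happen together.
    return [scalar_add(x, y) for x, y in zip(lanes_a, lanes_b)]

print(scalar_add(1, 10))                          # prints: 11
print(simd_add([1, 2, 3, 4], [10, 20, 30, 40]))   # prints: [11, 22, 33, 44]
```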

Types of operations with an ALU

First of all, we have to bear in mind that an ALU cannot work by itself, so a control unit is necessary to indicate which instruction to carry out and on what data. In this explanation we are going to suppose that we have a control unit accompanying our ALU.

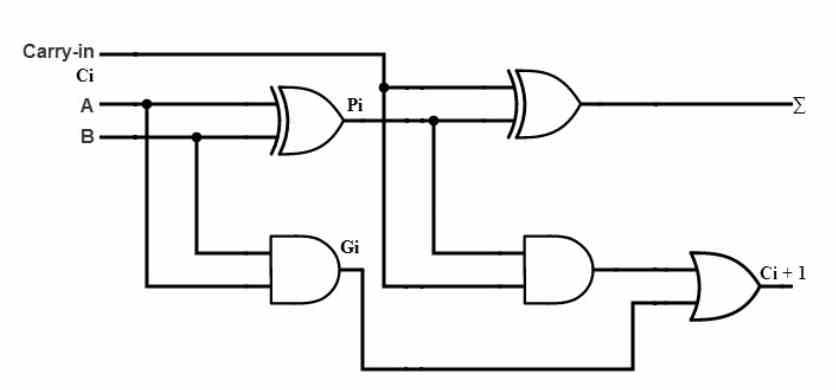

An ALU is what allows any type of processor, be it a CPU or a GPU, to perform mathematical operations with binary numbers. It is nothing more than a binary calculator, and the simplest type of ALU is one that adds two numbers of 1 bit each, an operation that would be as follows:

| Operation | Result | Carry |
|---|---|---|
| 0 + 0 | 0 | 0 |
| 0 + 1 | 1 | 0 |
| 1 + 0 | 1 | 0 |
| 1 + 1 | 0 | 1 |

If you look at the result column, these are the specifications of a logic gate of the XOR type, which is almost an OR gate except for the last row. The difference comes from the carry when adding 1 + 1, since the result of adding binary 1 + 1 is 10 and not 1. We have to take that carried 1 into account, which an AND gate can produce, and therefore a single gate is not enough. This matters even more if we want to work with a much higher precision in bits: each additional bit has to add its two inputs plus the carry coming from the previous bit, which makes for a much more complex ALU.
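A minimal sketch of how the carry can be handled, assuming the usual construction: a half adder (XOR for the result bit, AND for the carry), a full adder built from two half adders, and a chain of full adders for wider numbers. The function names and the default width of 4 bits are illustrative choices.

```python
def half_adder(a, b):
    """Add two 1-bit values: XOR gives the result bit, AND gives the carry."""
    return a ^ b, a & b

def full_adder(a, b, carry_in):
    """Add two 1-bit values plus the carry coming from the previous bit."""
    partial, carry1 = half_adder(a, b)
    total, carry2 = half_adder(partial, carry_in)
    return total, carry1 | carry2

def ripple_carry_add(x, y, width=4):
    """Add two width-bit numbers by chaining one full adder per bit."""
    result = 0
    carry = 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result, carry  # the final carry signals an overflow of the width

print(half_adder(1, 1))                  # prints: (0, 1)
print(ripple_carry_add(0b0101, 0b0011))  # prints: (8, 0)
print(ripple_carry_add(0b1111, 0b0001))  # prints: (0, 1)
```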

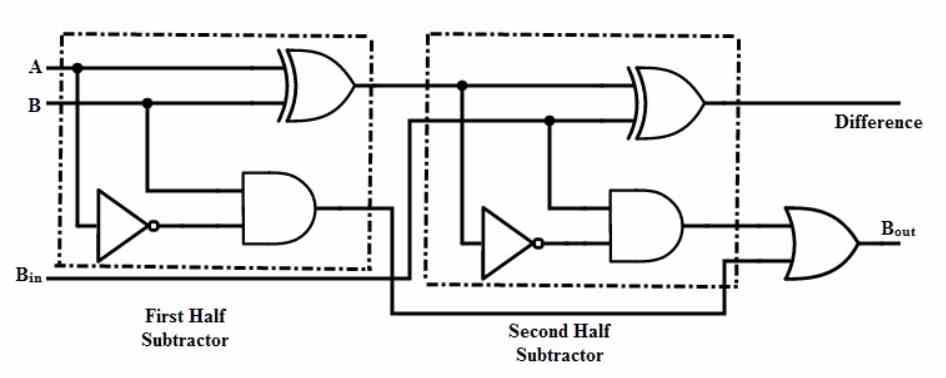

Binary subtraction in an ALU

Subtraction can be derived with the following formula:

A – B = A + NOT (B) +1

The trick here is very simple and is based on the fact that we are working with binary integers in two's complement; it does not work with floating point numbers. We can use the same mechanism that adds two numbers to perform the subtraction. All we need is to invert the value of the second operand through a series of NOT gates and add 1 to the result. Thanks to this, the same hardware that performs an addition can also perform a subtraction.
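The formula can be checked with a short sketch. It assumes a fixed register width (8 bits here, an arbitrary choice) and uses Python's integer addition to stand in for the adder hardware.

```python
def subtract(a, b, width=8):
    """Compute A - B as A + NOT(B) + 1 inside a fixed-width register."""
    mask = (1 << width) - 1
    inverted_b = ~b & mask              # the row of NOT gates
    return (a + inverted_b + 1) & mask  # the adder; bits beyond the width are dropped

print(subtract(25, 5))   # prints: 20
print(subtract(5, 25))   # prints: 236, the 8-bit two's complement pattern for -20
```

A negative result comes out as its two's complement bit pattern, so it has to be read as a signed number to recover -20.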

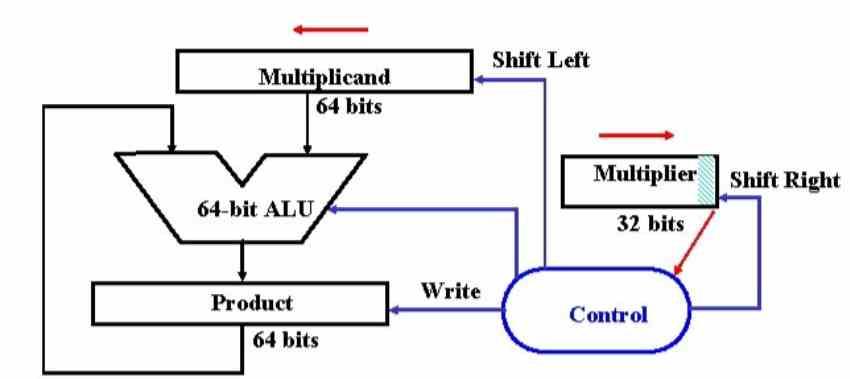

Binary multiplication and division by powers of 2

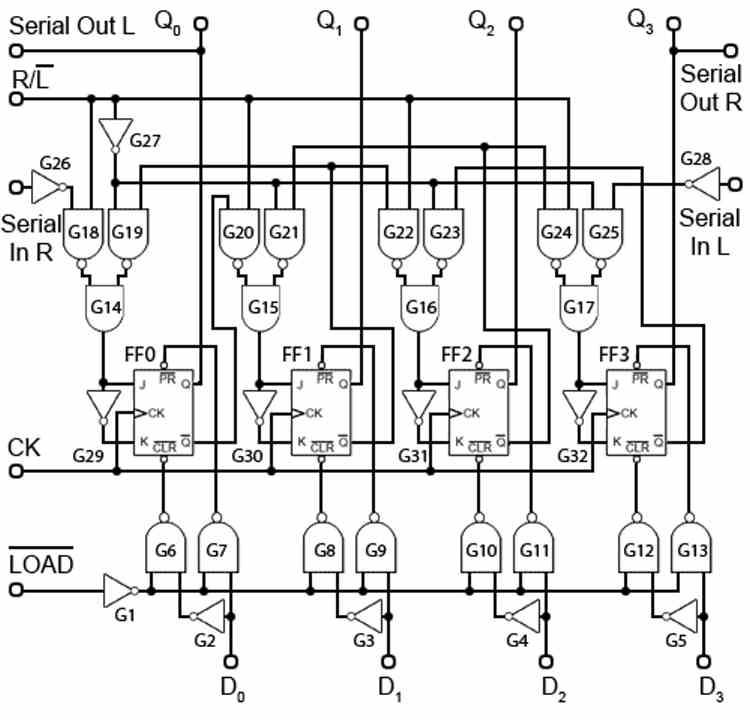

The simplest form of multiplication in a binary system is multiplication by powers of 2. Being a binary system, we only have to implement a mechanism that shifts the input data several positions to the left if we are multiplying, or to the right if we are dividing. The number of positions? It depends on the exponent of the power of 2: if we are multiplying by 8, which is 2^3, we have to shift the number 3 positions to the left, and if we are dividing by 8, 3 positions to the right. It is for this reason that ALUs also integrate bit shift operations, which are the basis for multiplying or dividing by powers of 2.
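As a quick illustration, assuming non-negative integers and hypothetical function names, the shifts look like this:

```python
def multiply_by_power_of_two(value, exponent):
    """Multiply by 2**exponent by shifting left."""
    return value << exponent

def divide_by_power_of_two(value, exponent):
    """Divide by 2**exponent by shifting right; the remainder is discarded."""
    return value >> exponent

print(multiply_by_power_of_two(5, 3))   # 5 * 8, prints: 40
print(divide_by_power_of_two(40, 3))    # 40 / 8, prints: 5
print(divide_by_power_of_two(45, 3))    # 45 / 8 without the remainder, prints: 5
```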

But if we talk about multiplying other types of numbers, it is best to go back to when we were little in school.

Multiplication with non-power of 2 numbers

For many years CPUs were very simple and could only add, since their ALUs had no hardware intended for multiplication. How did they multiply then? By executing several chained additions, which took many cycles. As a historical curiosity, one of the first CPUs for home computers to have a multiplication unit was the Intel 8086.

Suppose we want to multiply 25 x 25. When we were little, what we did was the following:

- First we multiply 25 x 5 and write down the result, which is 125.

- Second we multiply 25 x 2, which gives us 50 and we write down the result but shifting one position to the left.

- We add both numbers. Since the second number is shifted one position to the left, the result of the sum is not 175 but 625, which is the result of multiplying 25 x 25 in decimal.

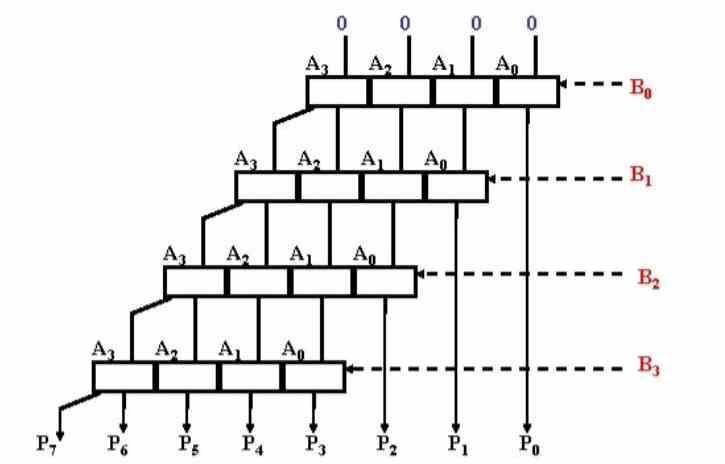

Well, in binary the process is the same, but the number 25 in this case is 11001 and therefore a 5-bit number. So in binary we are going to multiply 11001 x 11001, and for this we are going to use AND gates, because multiplying by a single bit gives either the number itself or zero.

- First, we multiply 11001 x 1 = 11001

- Second, we multiply 11001 x 0 = 00000, and we write the result one place to the left.

- Third, we multiply 11001 x 0 = 00000, and we write the result two places to the left.

- Fourth, we multiply 11001 x 1 = 11001, and we write the result three places to the left.

- Fifth, we multiply 11001 x 1 = 11001, and we write the result four places to the left.

- Taking into account the position of each partial result, we add them all, which should give as a result 1001110001, that is, 625 in decimal.
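The steps above can be sketched as a shift-and-add loop. This is a minimal model assuming non-negative integers; the function name is illustrative, and the conditional stands in for the row of AND gates.

```python
def shift_and_add_multiply(multiplicand, multiplier):
    """Multiply by adding one shifted partial product per bit of the multiplier."""
    product = 0
    position = 0
    while multiplier >> position:            # stop once no multiplier bits remain
        bit = (multiplier >> position) & 1
        # ANDing every bit of the multiplicand with this bit gives
        # either the multiplicand itself or zero.
        partial = multiplicand if bit else 0
        product += partial << position       # write it 'position' places to the left
        position += 1
    return product

result = shift_and_add_multiply(0b11001, 0b11001)
print(result, bin(result))   # prints: 625 0b1001110001
```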

More complex math operations

With the above explained you can build units to perform much more complex mathematical operations such as divisions, square roots, powers, and so on. The more complex the operation, the more transistors are needed. For each operation there is a different mechanism, and when the control unit tells an ALU the type of operation to execute, what it is doing is telling it to use the specific mechanism for that specific mathematical operation.
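One way to picture this selection is a table that maps an operation code to its mechanism. The opcode names and the 8-bit width below are hypothetical, chosen only for the sketch.

```python
# Each hypothetical opcode selects a different mechanism inside the ALU.
OPERATIONS = {
    "ADD": lambda a, b: a + b,
    "SUB": lambda a, b: a - b,
    "AND": lambda a, b: a & b,
    "OR":  lambda a, b: a | b,
    "SHL": lambda a, b: a << b,
}

def alu(opcode, a, b, width=8):
    """Run the mechanism chosen by the control unit and keep only 'width' bits."""
    mask = (1 << width) - 1
    return OPERATIONS[opcode](a, b) & mask

print(alu("ADD", 200, 100))        # 300 does not fit in 8 bits, prints: 44
print(alu("AND", 0b1100, 0b1010))  # prints: 8
print(alu("SHL", 5, 3))            # prints: 40
```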

As the important thing is to save on transistors, the most complex operations are defined as a succession of the simplest ones in order to reuse the hardware. This leads to those more complex operations requiring a higher number of clock cycles. Some designs do implement complete mechanisms that allow these operations to be carried out in far fewer cycles, in many cases even in a single cycle, but they are not common in CPUs.

Where they are used is in GPUs, where we see a type of unit called the Special Function Unit that is responsible for executing what we call transcendental operations, such as the trigonometric functions that are used in geometry.
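As an example of a complex operation defined as a succession of simpler ones, integer division can be sketched using only shifts, comparisons and subtractions, one step per bit. This follows the classic shift-and-subtract scheme and assumes non-negative operands that fit in the given width; it is not meant to describe any particular processor.

```python
def shift_subtract_divide(dividend, divisor, width=8):
    """Divide using only shifts and subtractions, one bit of quotient per step."""
    if divisor == 0:
        raise ZeroDivisionError("division by zero")
    quotient = 0
    remainder = 0
    for i in range(width - 1, -1, -1):
        # Bring down the next bit of the dividend, most significant first.
        remainder = (remainder << 1) | ((dividend >> i) & 1)
        if remainder >= divisor:
            remainder -= divisor   # reuse of the subtraction mechanism
            quotient |= 1 << i
    return quotient, remainder

print(shift_subtract_divide(200, 7))   # prints: (28, 4)
print(shift_subtract_divide(25, 5))    # prints: (5, 0)
```

Each loop iteration corresponds to at least one clock cycle in a simple design, which is why such operations take longer than an addition.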

Where does the ALU get the data to operate?

In the first place, we must bear in mind that an ALU does not operate directly on the data in memory. Instead, during the fetch and decode process, the data with which it has to work is stored in a register called the accumulator, on which the operations are performed.
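A toy model of an accumulator machine may help here. The instruction names (LOAD, ADD, SUB, STORE) and the program format are hypothetical and chosen only for this sketch.

```python
def run(program, memory):
    """Execute a list of (opcode, address) pairs on a single accumulator."""
    accumulator = 0
    for opcode, address in program:
        if opcode == "LOAD":
            accumulator = memory[address]   # copy the data from memory into the register
        elif opcode == "ADD":
            operand = memory[address]       # the operand is fetched first...
            accumulator += operand          # ...then the ALU works on the accumulator
        elif opcode == "SUB":
            operand = memory[address]
            accumulator -= operand
        elif opcode == "STORE":
            memory[address] = accumulator   # write the result back to memory
        else:
            raise ValueError(f"unknown opcode: {opcode}")
    return accumulator

memory = [25, 5, 0]
program = [("LOAD", 0), ("ADD", 1), ("STORE", 2)]
print(run(program, memory), memory)   # prints: 30 [25, 5, 30]
```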

In some more complex systems, more than one register is used for arithmetic operations, and in some cases there are even special registers for certain instructions. These are documented most of the time, but in other cases, because they are used by only certain instructions, they are not.

The reason for using registers is their proximity to the ALU: if RAM were used, it would take much longer to perform a simple operation. The other reason is that much more energy would be consumed to perform an operation.

With all this it is explained how an ALU works, at least in basic terms.