As we increase the output resolution of our games, the number of pixels grows, and with it the bandwidth and the number of operations per second required. GPUs face several design bottlenecks: not only bandwidth, but also the size of the chips and the power consumption that rises with it.
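To put rough numbers on that growth, here is a minimal sketch that counts pixels per frame and per second for a few common output resolutions. The resolution table, the 60 FPS default and the function names are our own illustrative assumptions, and real GPU cost does not scale perfectly linearly with pixel count.

```python
# Common output resolutions as (width, height); chosen for illustration only.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
}


def pixels_per_frame(width, height):
    """Number of pixels the GPU has to produce for one frame."""
    return width * height


def pixels_per_second(width, height, fps=60):
    """Pixels produced per second at a given frame rate (60 FPS assumed)."""
    return pixels_per_frame(width, height) * fps


def relative_cost(target, baseline="1080p"):
    """How many times more pixels `target` has than `baseline`."""
    tw, th = RESOLUTIONS[target]
    bw, bh = RESOLUTIONS[baseline]
    return pixels_per_frame(tw, th) / pixels_per_frame(bw, bh)


if __name__ == "__main__":
    for name, (w, h) in RESOLUTIONS.items():
        print(name, pixels_per_frame(w, h), round(relative_cost(name), 2))
```

Going from 1080p to 4K doubles each axis, so the pixel count per frame is multiplied by four, and the per-second figure grows by the same factor at a fixed frame rate.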

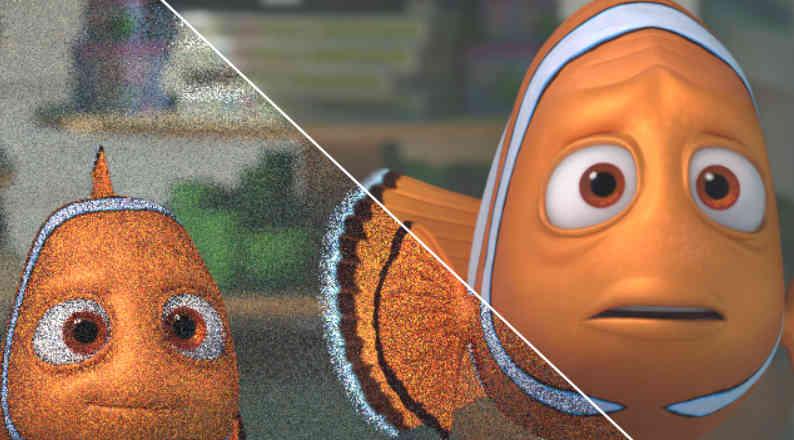

NVIDIA was the first to realize this, hence the development of its Tensor Cores and of algorithms such as DLSS. The idea is simply to render each frame at a much lower resolution and then, through algorithms based on computer vision, produce a version at a higher output resolution and therefore with a greater number of pixels. The predictions of a well-trained AI can draw an image extremely close to one rendered natively at the target resolution.
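The render-low-then-upscale idea can be sketched in a few lines. The per-axis scale factor and the function names below are hypothetical, and the nearest-neighbour step is only a placeholder for the reconstruction stage: it is not how DLSS works internally, where a trained neural network fills in the missing detail.

```python
def internal_resolution(out_w, out_h, axis_scale):
    """Resolution actually rendered, given a per-axis scale (assumed value)."""
    return round(out_w * axis_scale), round(out_h * axis_scale)


def shaded_fraction(out_w, out_h, axis_scale):
    """Fraction of the output pixels that are really rendered."""
    in_w, in_h = internal_resolution(out_w, out_h, axis_scale)
    return (in_w * in_h) / (out_w * out_h)


def upscale_nearest(frame, out_w, out_h):
    """Placeholder reconstruction: copy the nearest low-resolution pixel.

    `frame` is a list of rows; each row is a list of pixel values.
    """
    in_h, in_w = len(frame), len(frame[0])
    return [
        [frame[y * in_h // out_h][x * in_w // out_w] for x in range(out_w)]
        for y in range(out_h)
    ]


if __name__ == "__main__":
    # Rendering at half resolution per axis for a 4K output.
    print(internal_resolution(3840, 2160, 0.5))
    print(shaded_fraction(3840, 2160, 0.5))
    print(upscale_nearest([[1, 2], [3, 4]], 4, 4))
```

With a per-axis scale of 0.5 only a quarter of the output pixels are rendered, which is where the savings in bandwidth and operations come from. The quality of the final image then depends entirely on how good the reconstruction step is.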

AMD, on the other hand, has been forced to create FSR, designed around the AI limitations of its processors and intended to cut into the advantage that DLSS gives NVIDIA in certain games.

The future evolution of NVIDIA DLSS

We are quite sure that with Lovelace NVIDIA is going to greatly increase the computational capacity of its Tensor Cores. This will accelerate algorithms such as DLSS and, at the same time, make room for more complex versions of the algorithm that improve image quality over current versions of DLSS.

But one thing that caught our attention at NVIDIA’s own GTC 2021 was the creation of virtual environments for self-driving. Many of you may wonder what this has to do with DLSS, but both are applications of what we call computer vision, where we teach the AI system to differentiate basic shapes and colors so that it can then identify an image or reconstruct the same image at a higher resolution.

One of NVIDIA’s goals is to minimize the need to train the AI for each individual game, which would expand the number of games compatible with DLSS, and also to solve the problem of image inaccuracy with ray tracing. This matters because the next step will be the application of AI-based denoising algorithms, which can conflict with the results of DLSS, hence we expect DLSS 3.0 to be a union of both algorithms.

And what will the AMD FSR look like?

The next evolution of AMD FSR is clearer than that of DLSS. Let’s not forget that the current version of the AMD algorithm is not based on artificial intelligence; however, we have clues that suggest AMD could launch a version 2.0 that is based on AI.
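To illustrate what an upscaler with no AI looks like, here is a minimal sketch of bilinear interpolation, a classic hand-written filter that needs no trained network and no dedicated hardware. It is a generic textbook technique picked by us as an example, not AMD's FSR algorithm, and the function name is our own.

```python
def upscale_bilinear(frame, out_w, out_h):
    """Upscale a grayscale frame (list of rows of numbers) by blending
    the four nearest source pixels for every output pixel."""
    in_h, in_w = len(frame), len(frame[0])
    out = []
    for y in range(out_h):
        # Map the output row to a fractional source row.
        sy = y * (in_h - 1) / (out_h - 1) if out_h > 1 else 0.0
        y0 = int(sy)
        y1 = min(y0 + 1, in_h - 1)
        fy = sy - y0
        row = []
        for x in range(out_w):
            # Map the output column to a fractional source column.
            sx = x * (in_w - 1) / (out_w - 1) if out_w > 1 else 0.0
            x0 = int(sx)
            x1 = min(x0 + 1, in_w - 1)
            fx = sx - x0
            # Blend horizontally on both rows, then vertically.
            top = frame[y0][x0] * (1 - fx) + frame[y0][x1] * fx
            bottom = frame[y1][x0] * (1 - fx) + frame[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out


if __name__ == "__main__":
    print(upscale_bilinear([[0, 10], [20, 30]], 3, 3))
```

A filter like this can only average the pixels it already has, so it tends to soften edges and fine detail. That is the gap that a neural-network-based approach tries to close by predicting detail instead of averaging it.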

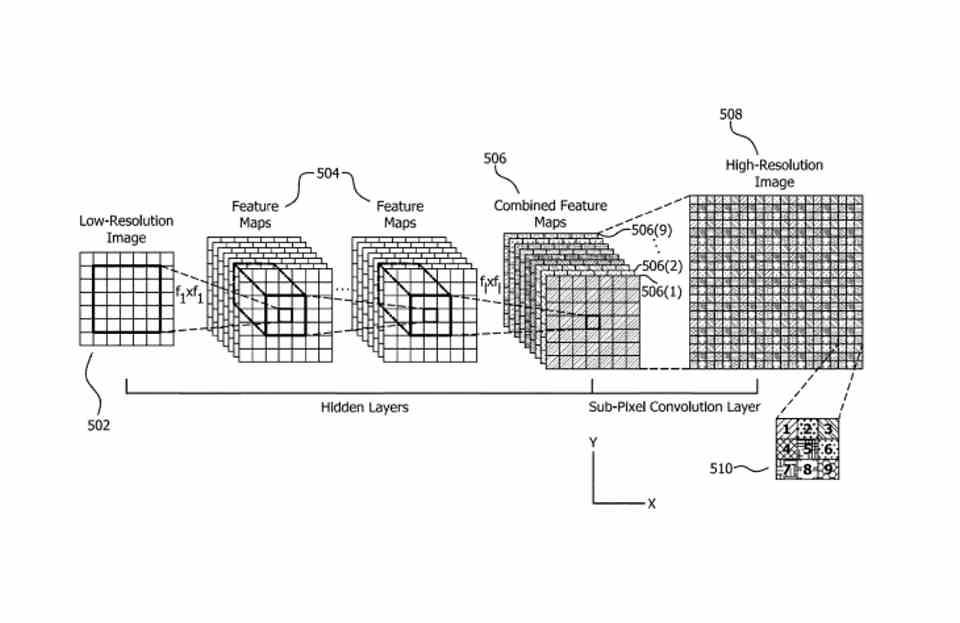

The first of these is the existence of an AMD super resolution patent that makes use of neural networks, which points to the addition of this type of hardware in future AMD GPUs of the RDNA family. It would be the first time that AMD adds this type of unit to its gaming GPUs.

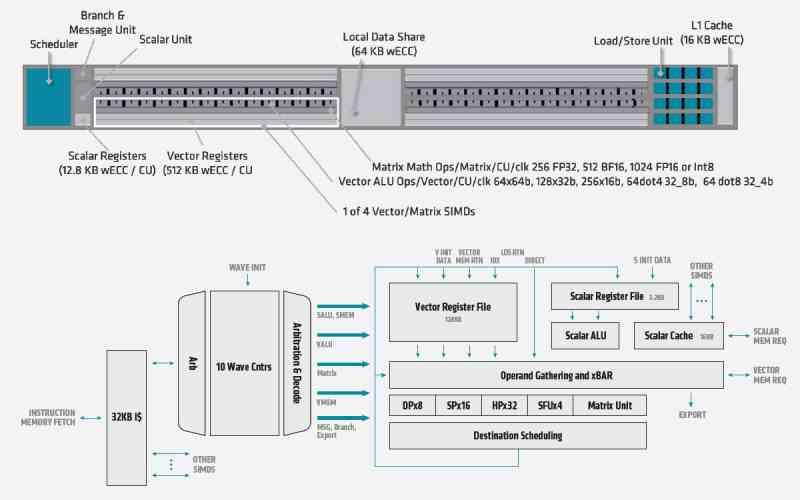

This would not be a problem for AMD, since it already has a unit of this type in CDNA, so it would only have to bring that unit over from the Compute Units of CDNA to those of RDNA. In any case, the biggest challenge for AMD is to catch up with NVIDIA, not only in hardware but also in software. Let’s not forget that DLSS is an algorithm in constant evolution, not something fixed.

So the direction of both DLSS and FSR is clear. What we do not know is whether AMD will get its act together and catch up with DLSS in algorithm quality, or whether both will fall into oblivion, displaced by the algorithm that Microsoft is developing and will include in future versions of DirectX.