AMD’s FSR has shaken up the GPU landscape in recent weeks, not only because it rivals NVIDIA’s DLSS at delivering higher-resolution images with fewer resources, but also because of its open nature, which allows it to be implemented on any GPU regardless of its architecture.

What are Super Resolution algorithms?

Super-resolution algorithms have become very popular recently, especially those that use convolutional neural networks, like NVIDIA’s DLSS. What sets the AMD solution apart? It is not based on artificial intelligence, so it does not need to be trained beforehand on a set of reference images. A convolutional neural network for computer vision works by learning a series of common patterns, which it then uses to reconstruct an image.

The advantage that AMD FidelityFX Super Resolution has over DLSS, when it comes to being implemented in any game, is that it carries no learned bias when generating new images. To understand the concept, imagine that we train an AI on a set of images that share a common artifact or image error. Then we ask the AI to reproduce those images or variants of them. Because it picked up that error during the training phase, it will reproduce the images with the same error.

As a result, an AI-based super-resolution system can reach conclusions that are not exact. That is why games with NVIDIA’s DLSS support arrive in a slow, controlled trickle, while FidelityFX Super Resolution is an algorithm that can be applied to any game.

FidelityFX Super Resolution is a spatial algorithm, not a temporal one

The method chosen by AMD takes its information from the current frame and only the current frame, so it differs from other resolution-scaling methods such as checkerboard rendering. When we talk about temporality, we mean that part of the information used to generate the higher-resolution version of the current frame comes from the previous frame. FSR lacks this temporal component: it takes the lower-resolution frame that the GPU has just rendered and builds the higher-resolution version of the image from that frame alone.

But what do we mean by resolution? The number of pixels that make up an image. When we increase the resolution of an image we increase the number of pixels, which creates new pixels that fill the space but whose color value we do not know. The simplest solution? Interpolation algorithms, which paint the missing pixels with colors that lie between those of the neighboring pixels. The more neighboring pixels you take as source information, the more accurate the result tends to be.
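To make the idea of interpolation concrete, here is a minimal sketch of bilinear upscaling on a tiny grayscale image. It is only an illustration of plain interpolation, not part of FSR; the function name `bilinear_upscale` and the sample values are made up for this example.

```python
def bilinear_upscale(image, new_w, new_h):
    """Upscale a grayscale image (list of rows of floats) by bilinear interpolation."""
    old_h, old_w = len(image), len(image[0])
    out = []
    for y in range(new_h):
        # Map the output row back to a fractional row in the source image.
        sy = y * (old_h - 1) / (new_h - 1) if new_h > 1 else 0.0
        y0 = int(sy)
        y1 = min(y0 + 1, old_h - 1)
        fy = sy - y0
        row = []
        for x in range(new_w):
            # Same mapping for the column.
            sx = x * (old_w - 1) / (new_w - 1) if new_w > 1 else 0.0
            x0 = int(sx)
            x1 = min(x0 + 1, old_w - 1)
            fx = sx - x0
            # Blend the four nearest source pixels by distance.
            top = image[y0][x0] * (1 - fx) + image[y0][x1] * fx
            bottom = image[y1][x0] * (1 - fx) + image[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out


low_res = [[0.0, 100.0],
           [100.0, 200.0]]
high_res = bilinear_upscale(low_res, 3, 3)
for line in high_res:
    print(line)
```

Each new pixel ends up with a value between those of its neighbors, which is exactly why this kind of upscaling looks soft: it has no notion of where the edges in the image are.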

The problem is that raw interpolation on its own is not good enough: the resulting images are of low quality and often differ from reality. Today many image editing applications use artificial intelligence algorithms to generate higher-resolution versions. As for FidelityFX Super Resolution specifically, its method of obtaining the missing pixels is not based on direct interpolation, but is more complex.

This is how AMD FidelityFX Super Resolution increases the resolution

We are going to stick to the official explanation that AMD has given, which we quote below:

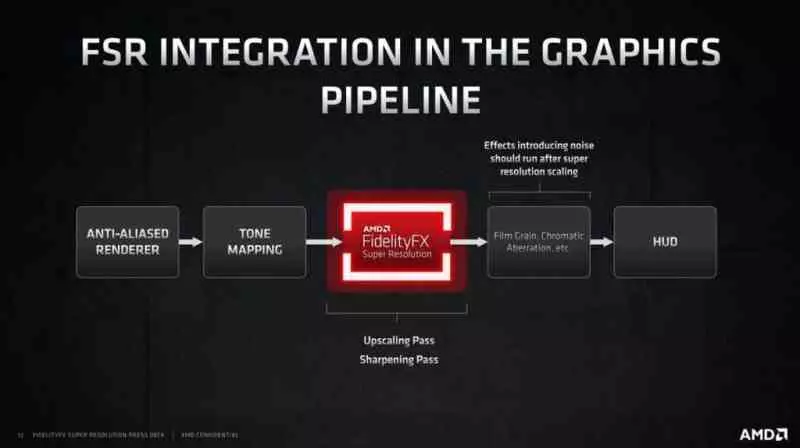

The FidelityFX Super Resolution is made up of two main steps.

This means there are two consecutive algorithms that run one after the other, or rather, the second takes the output generated by the first as its input. Let’s see what each of these steps is.

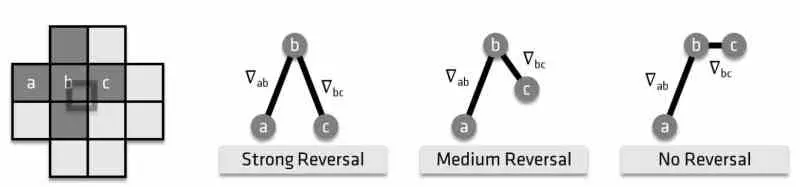

An upscaling pass called EASU (Edge-Adaptive Spatial Upsampling), which also performs edge reconstruction. In this step, the input frame is analyzed and the main part of the algorithm detects gradient inversions, essentially looking at how neighboring gradients differ from a set of input pixels. The intensity of the gradient inversions defines the weights that will be applied to the reconstructed pixels at the screen resolution.

To understand the quote, the first thing we need to know is what edge detection in an image refers to. It starts from a black-and-white (grayscale) version of the final frame, which is in RGB format: the values of the three channels are added and divided by 3 to obtain each pixel’s grayscale value. If we then keep only purely white (FFFFFF) or purely black (000000) values in the grayscale image, we get an image that outlines the edges.
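The two steps just described can be sketched in a few lines. This is a simplified illustration of the explanation above, not AMD’s code; the function names and the threshold of 128 are assumptions chosen for the example.

```python
def to_grayscale(rgb_image):
    """Average the R, G and B channels of every pixel, as described above."""
    return [[(r + g + b) / 3 for (r, g, b) in row] for row in rgb_image]


def to_black_and_white(gray_image, threshold=128):
    """Keep only pure white (255) or pure black (0). The threshold is an assumption."""
    return [[255 if value >= threshold else 0 for value in row] for row in gray_image]


# A tiny 3x2 RGB frame: bright on the left, dark on the right.
frame = [
    [(250, 250, 250), (240, 240, 240), (10, 20, 30)],
    [(255, 255, 255), (30, 30, 30), (0, 0, 0)],
]
gray = to_grayscale(frame)
mask = to_black_and_white(gray)
print(gray)
print(mask)
```

The places where the mask flips between 255 and 0 mark the boundary between the bright and dark regions, which is the rough outline of the edges that the text refers to.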

In AMD FidelityFX Super Resolution, the edge-detection image is produced at a resolution much higher than the one originally rendered, matching the output resolution that you want to achieve. It is combined with an image buffer that stores the gradient changes of each pixel, that is, the changes in color intensity between neighboring pixels. This information is then combined with classic interpolation to obtain the image at a higher resolution.
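As a rough picture of what a gradient buffer holds, the sketch below measures how much the intensity changes around each pixel using simple differences between neighbors. It is an assumption-laden illustration only: the real EASU shader is considerably more elaborate, and the name `gradient_buffer` is made up for this example.

```python
def gradient_buffer(gray):
    """For each pixel, sum the horizontal and vertical intensity changes.

    Neighbors outside the image are replaced by the nearest valid pixel.
    """
    h, w = len(gray), len(gray[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            left = gray[y][max(x - 1, 0)]
            right = gray[y][min(x + 1, w - 1)]
            up = gray[max(y - 1, 0)][x]
            down = gray[min(y + 1, h - 1)][x]
            row.append(abs(right - left) + abs(down - up))
        out.append(row)
    return out


# A vertical edge between a dark area (10) and a bright area (200).
luma = [
    [10, 10, 200, 200],
    [10, 10, 200, 200],
]
grads = gradient_buffer(luma)
print(grads)
```

Large values cluster around the edge and flat areas stay at zero. An edge-adaptive upscaler can use this kind of information to avoid blending colors across an edge when it fills in the missing pixels.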

A sharpening step called RCAS (Robust Contrast-Adaptive Sharpening) that extracts the detail of the pixels in the upscaled image.

The image generated in the first step is enhanced by a modified version of Contrast Adaptive Sharpening. The end result is an image halfway between plain interpolation and an AI-based reconstruction.
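To give an idea of what contrast-adaptive sharpening means, here is a small sketch that pushes each pixel away from the average of its neighbors, and does so more gently where the local contrast is already high. It is not AMD’s RCAS. The function name, the `max_strength` parameter and the way the strength is computed are all assumptions made for illustration.

```python
def adaptive_sharpen(gray, max_strength=0.5):
    """Sharpen a grayscale image, easing off where local contrast is already high."""
    h, w = len(gray), len(gray[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            center = gray[y][x]
            # Cross-shaped neighborhood, clamped at the image borders.
            neighbors = [
                gray[max(y - 1, 0)][x],
                gray[min(y + 1, h - 1)][x],
                gray[y][max(x - 1, 0)],
                gray[y][min(x + 1, w - 1)],
            ]
            local = neighbors + [center]
            contrast = (max(local) - min(local)) / 255.0
            # Less sharpening where contrast is high (hypothetical rule).
            strength = max_strength * (1.0 - contrast)
            sharpened = center + strength * (center - sum(neighbors) / 4)
            row.append(min(max(sharpened, 0.0), 255.0))
        out.append(row)
    return out


flat = [[100.0] * 3 for _ in range(3)]
bump = [[100.0, 100.0, 100.0],
        [100.0, 120.0, 100.0],
        [100.0, 100.0, 100.0]]
print(adaptive_sharpen(flat)[1][1])
print(adaptive_sharpen(bump)[1][1])
```

A flat area comes out unchanged, while the slightly brighter center pixel in `bump` is pushed further from its surroundings, which is the visual effect we perceive as extra detail.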