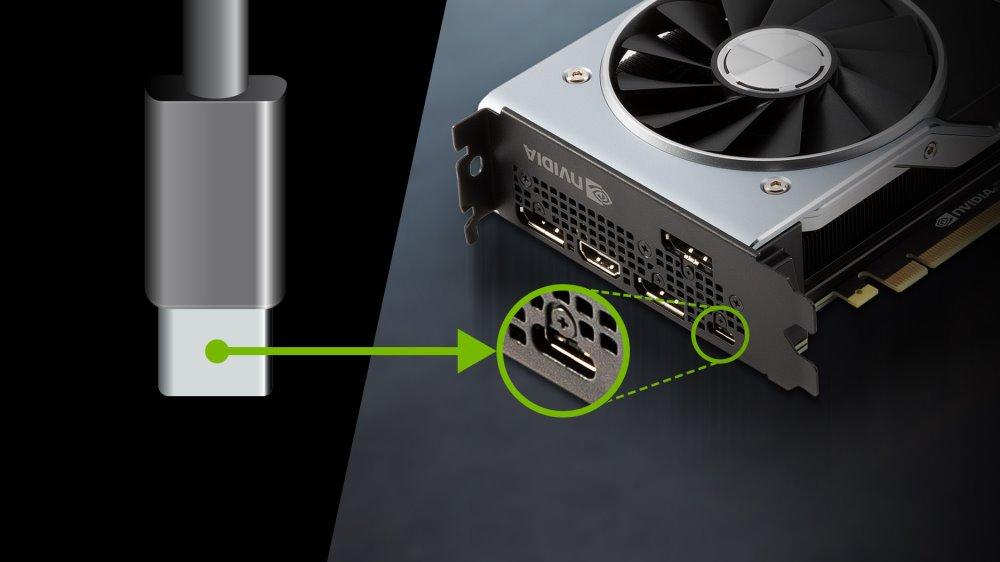

Stores carry a large number of monitors that include a USB Type-C port among their video inputs. These monitors use the high bandwidth of that interface to transmit video at high speed. The connector has further advantages: it carries a USB 2.0 data channel in parallel with the video, and above all it can supply power over the same cable, removing the need for a separate power supply. The problem is that a large share of the graphics cards on sale have no output of this type, which limits the use of these monitors mostly to laptops.
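To give a sense of the bandwidth involved, here is a minimal Python sketch that estimates the uncompressed data rate of a few common video modes. It assumes 24 bits per pixel and counts only the visible pixels, ignoring blanking intervals and link overhead, so real requirements are somewhat higher. The function name `video_data_rate_gbps` is hypothetical.

```python
# Rough, uncompressed video data rate for the visible pixels only.
# Real video links also carry blanking intervals and protocol overhead,
# so treat these figures as a lower bound.

def video_data_rate_gbps(width: int, height: int, refresh_hz: float,
                         bits_per_pixel: int = 24) -> float:
    """Return the active-pixel data rate in Gbit/s."""
    bits_per_second = width * height * refresh_hz * bits_per_pixel
    return bits_per_second / 1e9


if __name__ == "__main__":
    for w, h, hz in [(1920, 1080, 60), (2560, 1440, 144), (3840, 2160, 60)]:
        rate = video_data_rate_gbps(w, h, hz)
        print(f"{w}x{h} @ {hz} Hz: {rate:.2f} Gbit/s")
```

For example, 3840×2160 at 60 Hz works out to roughly 11.94 Gbit/s before any overhead.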

Why is USB-C hardly used as a video output?

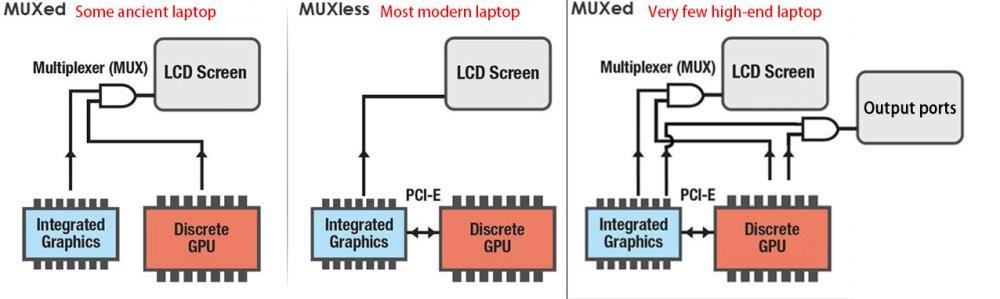

There is a technical reason behind all of this, and it has to do with which video signal the GPU's display controller generates natively. Manufacturers pick one standard, HDMI or DisplayPort, and usually add a converter chip to produce the other: from DisplayPort to HDMI or from HDMI to DisplayPort, depending on which one is native. In laptops, whose internal screens are connected through eDP (embedded DisplayPort), DisplayPort is the native signal and a converter provides HDMI. On desktop it is the other way around, and this gives us the opportunity to explain a technical curiosity: refresh rates and video transmission standards.

- HDMI is mostly used with refresh rates that are multiples of 60 Hz.

- DisplayPort is more versatile in this regard and also supports multiples of 72 Hz and even 90 Hz.

You will have noticed that many monitors offer much higher refresh rates on their DisplayPort inputs than on their HDMI inputs. The key point is that USB-C carries video using DisplayPort Alternate Mode, so if the GPU's display controller is natively DisplayPort, adding a USB-C video output is easy. That is why these outputs proliferate mainly in laptops, whose integrated screens already require a DisplayPort-based controller.
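To make the DisplayPort Alternate Mode trade-off concrete, here is a minimal Python sketch of how much DisplayPort bandwidth a USB-C port can offer. It assumes the standard per-lane raw rates for HBR2 (5.4 Gbit/s) and HBR3 (8.1 Gbit/s) with 8b/10b encoding, and models only the 2-lane and 4-lane configurations; it ignores Display Stream Compression. The helper names are hypothetical, and the 12.74 Gbit/s target is the active-pixel rate of 2560×1440 at 144 Hz with 24 bits per pixel.

```python
# Effective DisplayPort payload bandwidth over USB-C in DP Alternate Mode.
# USB-C has four high-speed lanes; DisplayPort can take two or four of them.

RAW_LANE_RATE_GBPS = {"HBR2": 5.4, "HBR3": 8.1}
ENCODING_EFFICIENCY = 8 / 10  # 8b/10b: 8 payload bits per 10 line bits


def alt_mode_bandwidth_gbps(link_rate: str, dp_lanes: int) -> float:
    """Payload bandwidth when `dp_lanes` high-speed lanes carry DisplayPort."""
    if dp_lanes not in (2, 4):
        raise ValueError("this sketch only models 2- or 4-lane configurations")
    return RAW_LANE_RATE_GBPS[link_rate] * dp_lanes * ENCODING_EFFICIENCY


def companion_usb(dp_lanes: int) -> str:
    # With all four lanes on DisplayPort, only the separate USB 2.0 pair
    # remains for data; with two lanes, the other two can carry USB 3.x.
    return "USB 2.0" if dp_lanes == 4 else "USB 3.x"


def fits(required_gbps: float, link_rate: str, dp_lanes: int) -> bool:
    """True if the uncompressed stream fits in the configured link."""
    return required_gbps <= alt_mode_bandwidth_gbps(link_rate, dp_lanes)


if __name__ == "__main__":
    required = 12.74  # 2560x1440 @ 144 Hz, 24 bpp, active pixels only
    for rate in ("HBR2", "HBR3"):
        for lanes in (2, 4):
            bw = alt_mode_bandwidth_gbps(rate, lanes)
            verdict = "fits" if fits(required, rate, lanes) else "does not fit"
            print(f"{rate} x{lanes} + {companion_usb(lanes)}: "
                  f"{bw:.2f} Gbit/s -> {verdict}")
```

With these numbers, two HBR3 lanes (about 12.96 Gbit/s) are just enough for that mode while leaving two lanes for USB 3.x, whereas two HBR2 lanes (8.64 Gbit/s) are not.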

The real motive

Graphics cards for desktop computers are powered by the PCI Express slot itself and by additional power connectors. Although a graphics card does not use all the power these sources can deliver, manufacturers prefer not to spend part of that budget feeding an external monitor over USB-C. They would rather give that power to the GPU and video memory and get better gaming results than the competition.
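The power argument can be sketched as simple arithmetic. This minimal Python example assumes the commonly cited PCI Express limits (75 W for an x16 slot, 75 W for a 6-pin connector, 150 W for an 8-pin connector) and a hypothetical USB-C allowance of 15 W (5 V at 3 A) reserved for an external display. Real cards set their own board power limit and split power across rails, so this is only an envelope calculation, and the function names are hypothetical.

```python
# Hypothetical power envelope: how much is left for GPU + VRAM once a
# USB-C port reserves power for an external display.

CONNECTOR_LIMIT_W = {"pcie_x16_slot": 75, "6-pin": 75, "8-pin": 150}


def power_envelope_w(connectors: list) -> int:
    """Sum of the nominal limits of the slot and auxiliary connectors."""
    return sum(CONNECTOR_LIMIT_W[c] for c in connectors)


def left_for_gpu_w(connectors: list, usb_c_reserve_w: float = 0.0) -> float:
    """Envelope minus whatever is set aside for a USB-C display output."""
    return power_envelope_w(connectors) - usb_c_reserve_w


if __name__ == "__main__":
    board = ["pcie_x16_slot", "8-pin"]  # hypothetical card: slot + one 8-pin
    usb_c_reserve = 15.0                # assumed: 5 V x 3 A for the monitor
    envelope = power_envelope_w(board)
    remaining = left_for_gpu_w(board, usb_c_reserve)
    print(f"Envelope: {envelope} W, left for GPU + VRAM: {remaining:.0f} W "
          f"({usb_c_reserve / envelope:.1%} given up to USB-C)")
```

Under these assumptions, roughly 7% of the envelope goes to the USB-C port, power that a manufacturer could otherwise spend on slightly higher clocks.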

Consider two models that are identical in terms of GPU and memory configuration. Small details such as better cooling or a higher clock speed, even by a few tens of MHz, are what set one model apart from the competition, and that, more than anything else, is what has held back adoption.

A potential change for the future

Take a laptop with a dedicated GPU, that is, one with a graphics chip and its own VRAM on the board. Even if the CPU also has integrated graphics, the two work in a switched arrangement and share the display controller's outputs. As a result, there are no separate video outputs for the graphics card and for the motherboard; after all, this type of computer has only one PCB.

Ideally, something similar would happen in tower computers: the graphics card would have no video outputs of its own, and the signal would instead be sent through the PCI Express slot to the motherboard. The motherboard's ports could then be used, or additional ports added up to the limit the GPU supports. We could even add one or more USB-C video outputs to connect several screens of this type or one or more virtual reality headsets.

This is one reason why graphics cards could make good use of PCI Express 5.0 in the future: the surplus bandwidth could be put to this purpose. It would make configurations more versatile and avoid so many unused duplicate ports. What's more, with VR in mind, we would like to see a USB-C video output on the front of the tower rather than on the back, mostly for convenience.
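A back-of-the-envelope check of that surplus is sketched below in Python. It assumes the PCI Express signalling rates (16 GT/s per lane for 4.0, 32 GT/s for 5.0) with 128b/130b encoding, and a hypothetical setup of four uncompressed 4K 60 Hz displays whose frames travel from the GPU to the motherboard over the same link. Packet overhead and the GPU's normal traffic are ignored, and the function names are hypothetical; this illustrates the article's proposed design, not how current platforms work.

```python
# Rough share of a PCIe x16 link that routed display streams would use.

GT_PER_LANE = {3: 8.0, 4: 16.0, 5: 32.0}  # PCIe 3.0+ use 128b/130b encoding


def pcie_gbps(gen: int, lanes: int) -> float:
    """Usable bandwidth per direction in Gbit/s (encoding only, no packets)."""
    return GT_PER_LANE[gen] * 128 / 130 * lanes


def stream_gbps(width: int, height: int, refresh_hz: float,
                bpp: int = 24) -> float:
    """Uncompressed active-pixel data rate of one display, in Gbit/s."""
    return width * height * refresh_hz * bpp / 1e9


if __name__ == "__main__":
    displays = [(3840, 2160, 60)] * 4  # hypothetical: four 4K60 outputs
    needed = sum(stream_gbps(*d) for d in displays)
    for gen in (4, 5):
        link = pcie_gbps(gen, 16)
        print(f"PCIe {gen}.0 x16: {link:.1f} Gbit/s, "
              f"displays use {needed / link:.1%}")
```

Under these assumptions the four streams would take a little under a tenth of a PCIe 5.0 x16 link in one direction, which is the kind of headroom the paragraph above has in mind.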