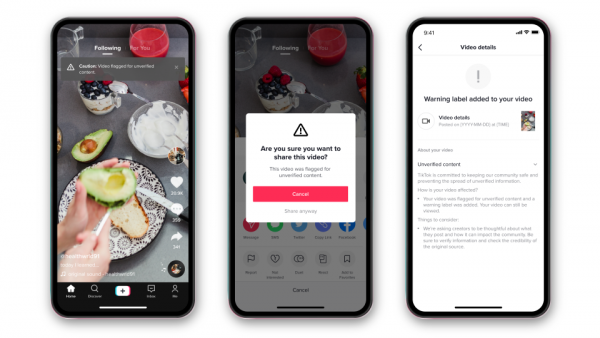

TikTok is introducing a new feature to combat unverified information on its platform. The app will start warning users before they share videos containing unverified claims. The update is intended to address a gray area in the fact-checking process: claims that fact-checkers cannot confirm either way.

With the update, users who try to share a video that TikTok’s fact-checkers have marked as unconfirmed will see a pop-up window that says “This video has been flagged for unverified content.” They can still share it if they want, but the video won’t appear on other users’ For You pages. TikTok will also notify the person who originally posted the video that it has been flagged.

Does TikTok’s unverified info feature really work?

TikTok hopes the message will create “a pause for people to consider their next move” before they choose to “cancel” or “share anyway.” The company says that in early tests, the warnings reduced sharing of flagged videos by 24 percent. The added friction is similar to a Twitter experiment that prompts users to read articles before sharing them, a test Twitter says has been successful.

In some ways, TikTok has taken a more aggressive approach to misinformation than other social media platforms. The company works with several third-party fact-checking organizations and removes videos they debunk. Still, some posts inevitably slip through, and at times the company has had to play catch-up, as it did after the election and the violence in Washington, D.C. For its part, TikTok says the new unverified-content warning should help it better handle content that emerges “during ongoing events.”
