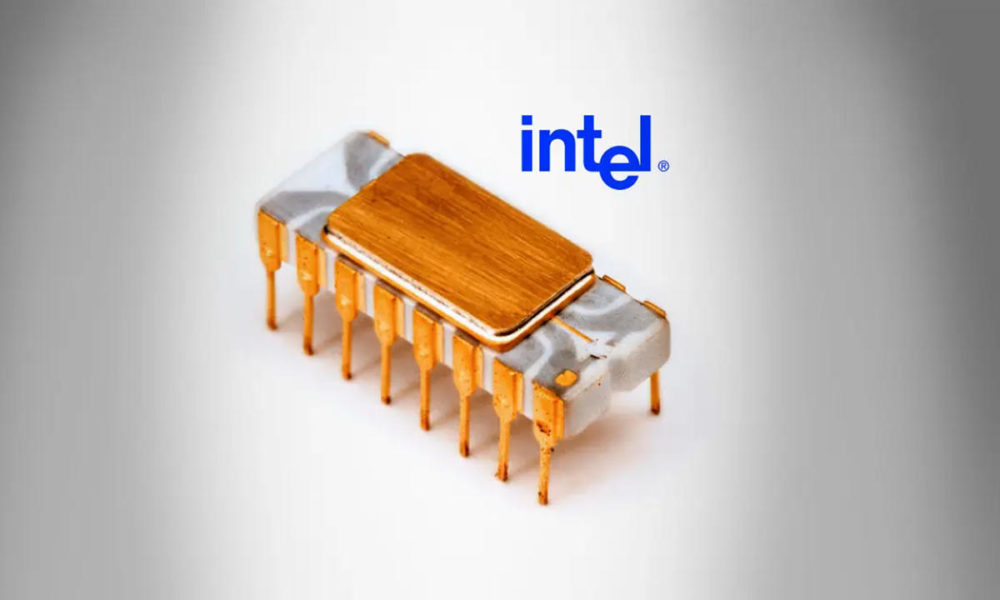

Today marks 50 years since the announcement of the Intel 4004, the first commercial single-chip microprocessor in the history of computing and an early demonstration of the possibilities of Moore’s Law, with which Intel would go on to dominate the market for decades.

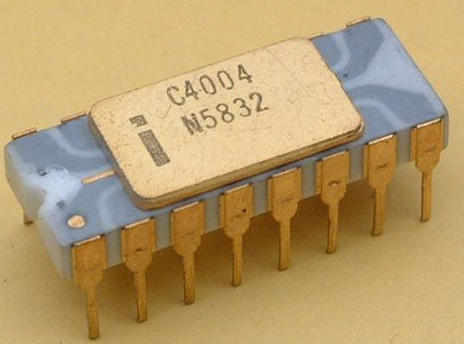

The Intel 4004 was a 4-bit CPU with a maximum clock frequency of 740 kHz. It came in a 16-pin package and contained 2,300 transistors. The schematics of the chip and its support circuits (the chipset) were recovered a few years ago and are publicly available.

The microprocessor was created by Federico Faggin for a calculator from the Japanese firm Busicom. Faggin, whom Intel had hired from Fairchild Semiconductor, was another great pioneer of the 1970s: he had developed Silicon Gate Technology (SGT) and designed the first commercial MOS integrated circuit to use it.

Faggin (and Intel) came up with a chipset called MCS-4 (short for “Micro Computer System”), made up of four integrated circuits (ICs), that dramatically simplified the internal design of the calculator. Alongside the 4004 itself, it comprised three support chips: the 4001 ROM, the 4002 RAM, and the 4003 I/O expander.

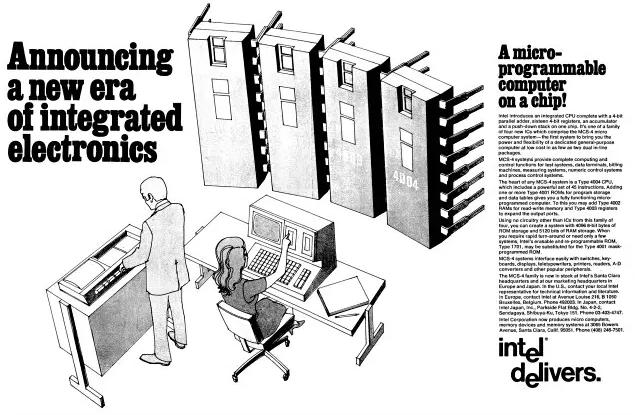

After renegotiating its contract with Busicom, Intel was free to sell the MCS-4 chipset to other manufacturers. It did so on November 15, 1971, the date we celebrate today, with an announcement in Electronic News magazine. The ad promised “a new era of integrated electronics”, and it was one of the very few occasions on which the advertising fell short of what came later.

Intel 4004 and Moore’s Law

The development of this chip for the Busicom calculator was key to the great personal computer revolution. Until then, central processing units (CPUs) consisted of one or more circuit boards packed with integrated circuits and discrete electronic components. The great innovation of Faggin and Intel was to integrate these components onto a piece of silicon smaller than a fingernail.

A few years later, Intel released a revised version, the 4040, but it was the 8080 used in the Altair 8800 that truly ushered in the era of the personal computer. The idea behind all of this had been set out a few years earlier in Moore’s Law, formulated by Intel co-founder Gordon E. Moore, who predicted that the number of transistors per unit area in integrated circuits would double every year. Ten years later he revised his prediction, lengthening the doubling period to two years.
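The difference between the two doubling periods is easier to appreciate with numbers. The following is only a minimal sketch in Python: the function name `projected_transistors` is made up for illustration, and using the 4004's total of 2,300 transistors as the starting point is a simplifying assumption, since Moore's Law speaks of transistor density rather than the count of one particular chip.

```python
def projected_transistors(start_count, years, doubling_period_years):
    """Idealized projection: the count doubles once per doubling period."""
    return start_count * 2 ** (years / doubling_period_years)


# Assumed starting point: the 2,300 transistors of the Intel 4004 (1971).
START = 2300

for years in (2, 10, 20):
    every_year = projected_transistors(START, years, 1)
    every_two_years = projected_transistors(START, years, 2)
    print(
        f"after {years:2d} years: "
        f"doubling yearly = {every_year:,.0f}, "
        f"doubling every two years = {every_two_years:,.0f}"
    )
```

Because the growth is exponential, stretching the doubling period from one year to two does not halve the projected figure; it takes its square root relative to the starting count, which is why the two curves drift apart so quickly.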

Today the debate continues over whether the law can hold indefinitely, and Moore himself made clear that it had an expiration date. Although Intel, under its new CEO Pat Gelsinger, has recently promised that Moore’s Law still has some life left in it, it seems that only replacing silicon with new materials such as graphene will be able to keep its central idea alive.

In any case, no one doubts its importance: it defined business strategy in the semiconductor industry and made possible microprocessors such as the Intel 4004 and, later, the personal computer. It also underpinned Intel's great dominance of personal computing with its x86 architecture, which companies like Apple (and others) are now challenging, also with silicon but with a different architecture, ARM.

As for the future, the most exciting developments will undoubtedly come from quantum computing, although it is still decades away from reaching consumers.

Update

Intel is also celebrating the 50th anniversary of the Intel® 4004, “the world’s first commercially available microprocessor. With its launch in 1971, the 4004 paved the way for modern computing thanks to microprocessors, the ‘brains’ that make almost all modern technology possible, from the cloud to the edge. Microprocessors enable the convergence of technology superpowers – ubiquitous computing, ubiquitous connectivity, edge cloud infrastructure and artificial intelligence – and create a pace of innovation that is advancing faster than ever today.”

More information is available in the Intel press room. Also of interest is an opinion piece by Elizabeth Jones, Intel Corporation historian.