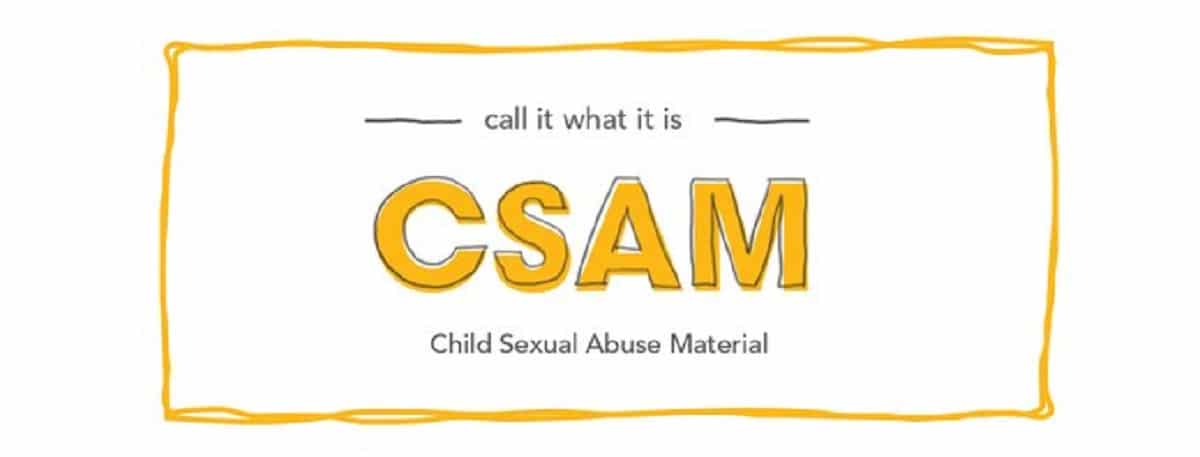

Apple has announced three new features aimed at child protection on its devices. The idea itself is fantastic. However, some have taken the opportunity to put a finger on the wound: they wonder whether what Apple wants to do is really covert surveillance that pushes privacy on the Mac, iPad, or iPhone into the background. In response, those in charge of the new functionality have gone out of their way to explain how it works.

Erik Neuenschwander explains how the system works to improve child protection

We are talking about new functionality that will go live in the US in the fall and whose purpose is to protect children against sexual abuse. It focuses on the Photos app, iMessage, Siri, and search, so it affects every Apple device on which these applications run. That includes Macs: although the Mac is not the device par excellence for taking photos, it is used to store and organize them, and it syncs them through iCloud. It also runs iMessage, Siri and, above all, search.

Detection of CSAM (child sexual abuse material) works mainly in iCloud Photos. A system called NeuralHash computes identifiers for images and matches them against identifiers provided by the National Center for Missing & Exploited Children, in order to detect sexual abuse and similar content in iCloud photo libraries. A separate feature covers communication security in iMessage.

A parent can activate the iMessage feature on the devices of children under 13 years of age. It shows a warning when it detects that an image the child is about to view is explicit. Siri and the search system are affected too: they will step in when a user tries to search for related terms.

Neuenschwander explains why Apple announced the Communication Security feature in iMessage along with the CSAM Detection feature in iCloud Photos:

As important as it is to identify the known CSAM collections where they are stored in Apple’s iCloud photo service, it is also important to try to get ahead of that already horrible situation. It is also important to do things to intervene earlier, when people start to enter this troublesome and harmful area, or when there are already people trying to lead children into situations where abuse can occur. Message security and our interventions in Siri and search actually affect those parts of the process. So we are really trying to disrupt the cycles that lead to CSAM that could ultimately be detected by our system.

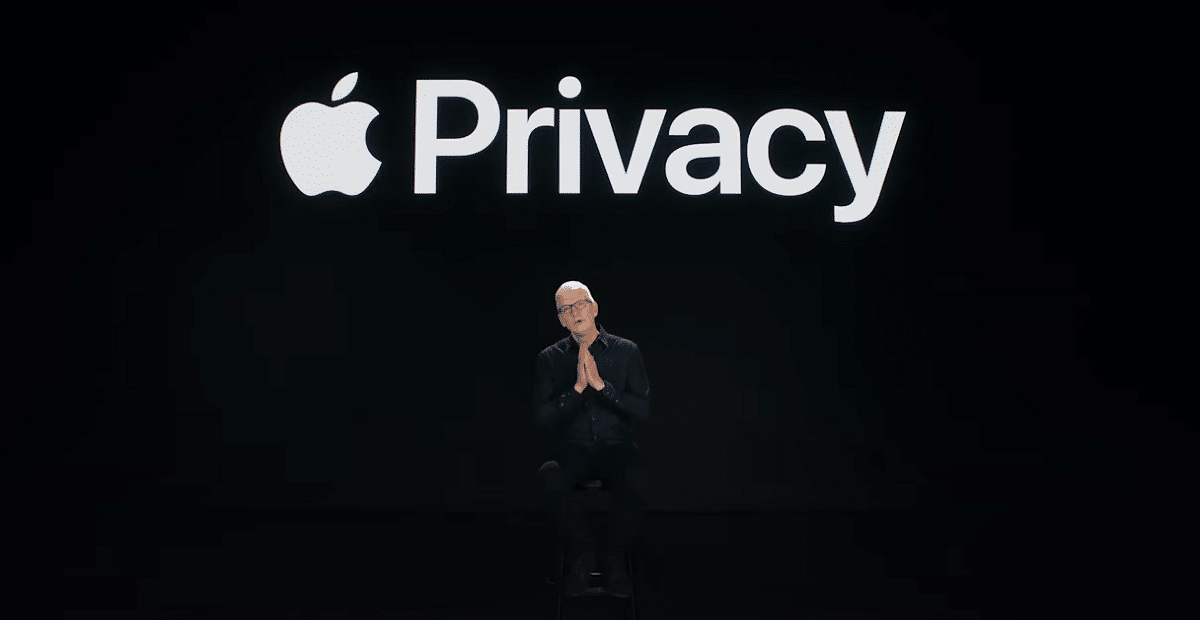

“Privacy will be intact for all who do not participate in illegal activities”

A difficult phrase. This is how Neuenschwander has defined and defended Apple’s position against those who accuse the company of opening a back door to spy on its users. The security executive maintains that security will exist and privacy will be preserved for those who do not commit illegal acts. That would be all very well if the system had no flaws. But who can assure me that the system is not imperfect?

Should Apple be trusted if a government tries to intervene in this new system?

Neuenschwander replies that, in principle, the system is being launched only for iCloud accounts in the US, where local laws do not offer these types of capabilities to the federal government. For now, only residents of the United States will be subject to this scrutiny. But he does not answer, or make clear, what will happen in the future when it is launched in other countries. That will take time, since it will depend on the laws of each of them. The conduct described in each country's criminal code differs in what counts as a crime, so Apple's algorithm would have to be adapted to each legal situation, and that will not be easy at all.

The most important point in all of this is the following: iCloud is the key. If users are not using iCloud Photos, NeuralHash will not run and will not generate any prompts. CSAM detection relies on a neural hash of each photo that is compared against a database of known CSAM hashes, and that database is part of the operating system image. None of it works if iCloud Photos is not being used.
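As a rough illustration of the logic just described, here is a minimal Python sketch. It is not Apple's implementation: the function names and the `icloud_photos_enabled` flag are hypothetical, and SHA-256 is used only as a stand-in for NeuralHash, which is a perceptual hash designed to match visually similar images rather than identical bytes. The sketch only shows the two ideas from the text: nothing is hashed when iCloud Photos is off, and matching is a lookup against a fixed set of known hashes.

```python
import hashlib
from typing import List, Sequence, Set


def stand_in_hash(image_bytes: bytes) -> str:
    """Stand-in for NeuralHash (assumption): a plain SHA-256 of the raw bytes."""
    return hashlib.sha256(image_bytes).hexdigest()


def scan_for_known_hashes(
    images: Sequence[bytes],
    known_hashes: Set[str],
    icloud_photos_enabled: bool,
) -> List[int]:
    """Return the indexes of images whose hash appears in known_hashes.

    If iCloud Photos is disabled, no hashing happens and nothing is reported.
    """
    if not icloud_photos_enabled:
        return []
    return [
        index
        for index, image in enumerate(images)
        if stand_in_hash(image) in known_hashes
    ]


# Hypothetical data: one "known" entry and a small photo library.
known = {stand_in_hash(b"known-sample")}
library = [b"holiday-photo", b"known-sample"]

print(scan_for_known_hashes(library, known, icloud_photos_enabled=True))   # [1]
print(scan_for_known_hashes(library, known, icloud_photos_enabled=False))  # []
```

The early return is the part that mirrors the article's point: with iCloud Photos turned off, the hash is never computed at all.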

Controversial, without a doubt. A good purpose, but with some gaps. Do you think privacy is at stake? Is it worth it?