Everything exists for a reason, yet many things we take for granted turn out, on closer inspection, not to make sense from a purely logical point of view. The graphics card is one such case: it should always have been part of the basic hardware and, therefore, should never have existed as an add-on component.

The first graphics card was born of a limitation

At that time, there was no frame buffer; that is, the entire frame was not generated in memory and then sent to the screen, because RAM was extremely expensive. There were no dual-port memories either, so while the video system was reading memory to draw the image with the electron beam, the rest of the system could not access that memory. What was the problem? If we add up the time all of this takes, we see that it leaves very little for everything else, such as executing the program.

When Steve Wozniak designed the Apple II, he realized that the video system accessed RAM at the same frequency as the processor itself, so he decided to cheat: he ran the memory at twice the clock speed of the central processor, which allowed one memory cycle to be allocated to the CPU and the next to the video system. Thanks to this, in text mode the machine could draw up to 40 characters per scan line.
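
The alternating access scheme described above can be illustrated with a very simplified sketch. It is not a model of the real Apple II timing: the function names and the figure of 80 memory cycles per visible scan line are assumptions chosen only so that the video system ends up with 40 fetches, one per character.

```python
def interleave_slots(memory_cycles):
    """Assign memory cycles alternately to the CPU and the video system."""
    return ["cpu" if i % 2 == 0 else "video" for i in range(memory_cycles)]


def fetches_per_owner(schedule):
    """Count how many memory cycles each owner received."""
    counts = {"cpu": 0, "video": 0}
    for owner in schedule:
        counts[owner] += 1
    return counts


# Assumed figure for illustration: 80 memory cycles in the visible
# part of one scan line, with memory running at twice the CPU clock.
schedule = interleave_slots(80)
counts = fetches_per_owner(schedule)
print(schedule[:4])  # ['cpu', 'video', 'cpu', 'video']
print(counts)        # {'cpu': 40, 'video': 40}
```

In this toy model the CPU never waits for the video system, because each one owns every other memory cycle. It also hints at why 80 columns did not fit: the video side would need twice as many fetches in the same amount of time.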

The problem came when it turned out that, for working with text, 40 columns were too few. Going beyond that required 2 MHz video hardware, which completely broke the timing between the CPU and RAM. The solution? Using one of the expansion slots to add a graphics card that allowed 80 columns of text. Today this sounds primitive, but it shows that the graphics card did not originate as a way to run video games, as many believe.

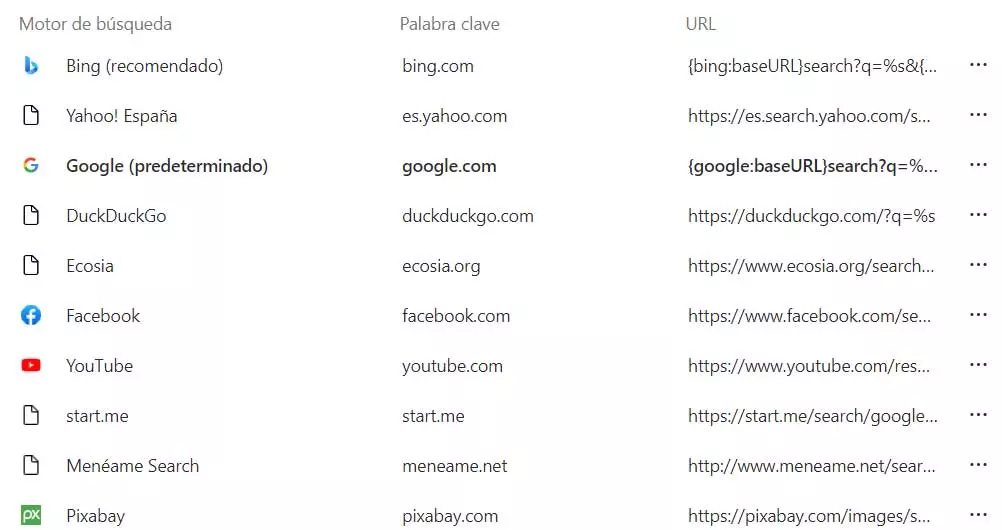

80 columns vs. 40 columns

At the time, one way to tell whether a system was intended for business use or for playing video games was whether it supported an 80-column video mode. There were not yet graphics chips that could serve both purposes, because that level of integration had not been reached, so the designers of any computer of the time had to decide whether to aim it at the home or the professional market.

And what about the graphics card in the first IBM PC?

As we have seen, the IBM PC was not the first home computer; however, it was the one that popularized the x86 instruction and register set that we still use today. Since its processor was not yet widely used, all the software had to be converted, but IBM chose it because its architecture was better than that of the 8080, and one thing it stood out for was its 20-bit addressing, which allowed it to address up to 1 MB of memory.
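
The 1 MB figure follows directly from the width of the address: each extra address bit doubles the number of locations that can be reached. A minimal sketch of that arithmetic follows. The helper name is hypothetical, and the 16-bit comparison is an addition for context (the 8080's address bus was 16 bits wide), not something stated in the text above.

```python
def addressable_bytes(address_bits):
    """Number of distinct byte addresses reachable with the given address width."""
    return 2 ** address_bits


# 20-bit addressing, as on the CPU of the first IBM PC
print(addressable_bytes(20))           # 1048576 bytes
print(addressable_bytes(20) // 1024)   # 1024 KB, i.e. 1 MB

# 16-bit addressing, added here only for comparison
print(addressable_bytes(16) // 1024)   # 64 KB
```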

Initially, and due to cost, there were no RAM modules as we know them today, and the system memory was soldered to the board. The solution? Adding expansion slots. If we add that the Intel CPU had no dedicated pins for peripherals and instead communicated with them over the same bus it used for memory, we already have everything needed to connect expansion cards. Let’s not forget that the IBM 5150 was not meant to be sold in homes, but was intended for the corporate market.

The variety of monitors also played a role

Now, IBM could have put all the extra circuitry on the motherboard of the first PC. However, at that time there was no standard for the type of monitor users would have, so the solution ended up being to sell the same base machine with a choice of graphics cards according to each user’s needs.

This decision allowed IBM to save on manufacturing costs, and it also allowed many PC and PC XT users to later upgrade their graphics card to an EGA. That was the historical moment in which today’s market for computer graphics cards began and in which the division by type of market vanished, unifying the market and turning the PC into the Swiss Army knife it remains today.