Wherever we look in the industry, architectures differ and are increasingly targeted at specific sectors, yet they share the same foundations, the same problems and the same advantages. That is why we are going to look at the main hardware bottlenecks and how they are evolving, to understand where we are heading.

Different components, different limitations, performance and latencies

Logically, the limitations or bottlenecks differ for each component, but they all share one factor, to a greater or lesser degree: latency. In some cases it is key, in others it goes almost unnoticed, but it will undoubtedly shape performance in the coming years. It also makes no difference whether we talk about a PC or a console: allowing for their particularities, both are affected.

To get an idea of how much latency matters, we have this chart, which became rather famous at the time. It illustrates quite well what nanoseconds, milliseconds and seconds mean for various components by comparing them with time as we humans normally perceive it.

As can be seen, on a 3 GHz processor a single clock cycle of only 0.3 ns is scaled to 1 second of human time. Accessing the L3 cache, which takes about 12.9 ns on average depending on the processor architecture, would represent 43 seconds of our life.

| Action | Average latency | Equivalent on a human time scale |
|---|---|---|
| One clock cycle at 3 GHz | 0.3 ns | 1 second |
| CPU L1 cache access | 0.9 ns | 3 seconds |
| CPU L2 cache access | 2.8 ns | 9 seconds |
| CPU L3 cache access | 12.9 ns | 43 seconds |
| RAM access | 70 to 100 ns | 3.5 to 5.5 minutes |
| NVMe SSD I/O | 7 to 150 µs | 2 hours to 2 days |
| HDD I/O | 1 to 10 ms | 11 days to 4 months |
| Internet, San Francisco to New York | 40 ms | 1.2 years |
| Internet, San Francisco to Australia | 183 ms | 6 years |
| Reboot of an OS virtualization | 4 seconds | 127 years |
| Reboot of a virtualized system | 40 seconds | 1200 years |
| Reboot of a physical system | 90 seconds | 3 millennia |

If we extrapolate this to RAM at up to 100 ns, it would be like a 5.5-minute trip to our destination. Perhaps the most striking figures are the Internet latencies, which are more familiar and easier for anyone to grasp: 40 milliseconds between San Francisco and New York would be equivalent to losing 1.2 years of our life, and if we change the destination to Australia, no less than 6 years.

Latency is therefore very important on a PC or a console, and as we can see, every generation for more than 40 years has fought to reduce it in order to increase instructions per cycle (IPC). With that said, let us see how it affects the main components and whether improvements are coming in the short or long term.
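The scaling behind the chart is a simple proportion, and a minimal sketch makes it easy to check. The helper names `human_seconds` and `describe` are ours, not part of the original chart, and the only assumption is the chart's first row: 0.3 ns equals 1 second. Under that strict scale the cache and RAM rows match, while the Internet row comes out nearer four years than the 1.2 listed, which suggests the slower rows may have been scaled at roughly 1 ns per second. That reading is an inference on our part, not something the chart states.

```python
# Scale taken from the chart's first row: one 3 GHz clock cycle (0.3 ns)
# is treated as 1 second of human time. Helper names are hypothetical.
CYCLE_NS = 0.3


def human_seconds(latency_ns: float, cycle_ns: float = CYCLE_NS) -> float:
    """Return the human-scale duration in seconds for a latency in ns."""
    return latency_ns / cycle_ns


def describe(seconds: float) -> str:
    """Format a duration using the largest unit that fits."""
    units = [
        ("years", 365 * 24 * 3600),
        ("days", 24 * 3600),
        ("hours", 3600),
        ("minutes", 60),
    ]
    for name, size in units:
        if seconds >= size:
            return f"{seconds / size:.1f} {name}"
    return f"{seconds:.0f} seconds"


# Latencies copied from the chart, expressed in nanoseconds.
samples = {
    "L3 cache": 12.9,
    "RAM (upper bound)": 100.0,
    "San Francisco to New York": 40e6,  # 40 ms
}

for label, latency_ns in samples.items():
    print(f"{label}: {describe(human_seconds(latency_ns))}")
```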

Processor latency

The processor is by far the component that suffers the most. Jim Keller, former chief architect at AMD, put it brilliantly at the time:

Performance limits are predictability of instructions and data

That is, if you can predict which resources each instruction and piece of data will need, you can manage them better and therefore reduce the waiting time between them, which increases performance.

Latency appears here once again. AMD saw the problem first, and Intel will now partly address it in Raptor Lake: increasing cache sizes to mitigate access times and to ease the movement of instructions and data through the cache hierarchy.

The goal is to avoid accessing RAM, or at least to limit those accesses as much as possible. AMD already did this with Ryzen from Zen 2 to Zen 3, and Intel will now do it in its next architecture.
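To see why a larger cache pays off, here is a rough sketch of the expected latency of a single memory access. The latencies are taken from the chart above. The hit rates are purely hypothetical values chosen for illustration, and the model is simplified: each access is charged only the latency of the level where it finally hits.

```python
def average_access_ns(levels, memory_ns):
    """Expected latency of one access through a cache hierarchy.

    levels: list of (latency_ns, hit_rate) pairs, ordered from L1 outward.
    memory_ns: RAM latency, paid by every access that misses all caches.
    """
    expected = 0.0
    reach = 1.0  # probability that an access gets as far as this level
    for latency_ns, hit_rate in levels:
        expected += reach * hit_rate * latency_ns
        reach *= 1.0 - hit_rate
    return expected + reach * memory_ns


RAM_NS = 100.0  # upper bound from the chart

# Hit rates below are assumptions, not measurements.
small_l3 = [(0.9, 0.90), (2.8, 0.80), (12.9, 0.50)]
large_l3 = [(0.9, 0.90), (2.8, 0.80), (12.9, 0.75)]

print(f"smaller L3: {average_access_ns(small_l3, RAM_NS):.3f} ns per access")
print(f"larger L3:  {average_access_ns(large_l3, RAM_NS):.3f} ns per access")
```

With these assumed numbers only a small fraction of accesses ever reach RAM, yet those few accesses account for a large share of the average, which is why catching more of them in L3 lowers it noticeably.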

RAM and GDDR6 memory

Latency is possibly the key issue in these two components. RAM is always questioned because of its latency, but what is really in demand is more bandwidth, more frequency and more speed without making the timings proportionally worse. DDR5 has delivered this for good, and although we will not notice it as much on PCs as on servers, it is a necessary technology for the sector in general.

As for GDDR6, latency matters less than the resulting bandwidth, since the computing capacity of GPUs keeps increasing and they need to be fed with data from their attached memory. Latency is therefore secondary, although far from negligible.

No improvements are in sight with GDDR6X as such, either: speed and frequency are being increased while latency stays at the same number of clock cycles.
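The relationship between frequency and timings can be made concrete by converting CAS latency from clock cycles into nanoseconds. The sketch below uses two illustrative kits, DDR4-3200 CL16 and DDR5-6000 CL30. They are our own examples and do not come from the article, and the function name is hypothetical.

```python
def cas_latency_ns(cl_cycles: int, data_rate_mts: int) -> float:
    """Convert CAS latency from clock cycles to nanoseconds.

    DDR memory transfers data twice per clock, so the clock in MHz is
    data_rate_mts / 2 and one clock cycle lasts 2000 / data_rate_mts ns.
    """
    return cl_cycles * 2000.0 / data_rate_mts


# Illustrative kits (assumed values): (name, CAS latency, data rate in MT/s).
kits = [
    ("DDR4-3200 CL16", 16, 3200),
    ("DDR5-6000 CL30", 30, 6000),
]

for name, cl, rate in kits:
    print(f"{name}: {cas_latency_ns(cl, rate):.2f} ns")
```

In this example the DDR5 kit nearly doubles the transfer rate while its first-word latency in nanoseconds stays the same, because the higher cycle count is offset by shorter cycles.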
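As a back-of-the-envelope illustration of why bandwidth dominates the discussion on GPUs, peak memory bandwidth follows directly from the per-pin data rate and the bus width. The two configurations below are illustrative assumptions and are not quoted from the article.

```python
def gddr_bandwidth_gbs(pin_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak bandwidth in GB/s: per-pin rate in Gbit/s times the number of
    data pins, divided by 8 bits per byte."""
    return pin_rate_gbps * bus_width_bits / 8


# Assumed example configurations: (per-pin rate in Gbit/s, bus width in bits).
configs = {
    "GDDR6, 16 Gbps, 256-bit bus": (16.0, 256),
    "GDDR6X, 21 Gbps, 384-bit bus": (21.0, 384),
}

for label, (rate, width) in configs.items():
    print(f"{label}: {gddr_bandwidth_gbs(rate, width):.0f} GB/s")
```

This is a theoretical peak. Real workloads reach less, and the figure says nothing about how long the first byte takes to arrive.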

SSD, performance and its latency on PC

SSDs are the least dependent on this factor, but low latency is still necessary for high-bandwidth random operations. Controllers need to exchange more and more data with the cells, so no performance can be lost to wasted clock cycles that would cut into the raw, IOPS-based bandwidth.

In summary, processor cores are the most affected, through their caches. The same will happen to GPUs before long, since their caches are also growing and being moved out of the shader groups, as AMD has done with Infinity Fabric and Infinity Cache. The aim there is precisely to avoid depending on faster GDDR memory while also not taking up space in the CUs.
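The link between latency, IOPS and bandwidth can be sketched with Little's law: the number of operations in flight equals throughput times latency. The example assumes a latency of 100 µs, which is inside the NVMe range of the chart above, 4 KiB random reads, and a latency that does not grow with queue depth. That last assumption is an idealization, since real drives slow down as queues fill up.

```python
def iops(queue_depth: int, latency_us: float) -> float:
    """Little's law: operations per second = operations in flight / latency."""
    return queue_depth * 1_000_000 / latency_us


def throughput_mbs(ops_per_second: float, block_bytes: int = 4096) -> float:
    """Bandwidth in MB/s for a given IOPS figure and block size."""
    return ops_per_second * block_bytes / 1e6


LATENCY_US = 100.0  # assumed, within the chart's NVMe range

for depth in (1, 32):
    rate = iops(depth, LATENCY_US)
    print(f"queue depth {depth}: {rate:.0f} IOPS, {throughput_mbs(rate):.2f} MB/s")
```

At a queue depth of 1 the drive is bound purely by latency, so every microsecond shaved off the access time turns directly into more random-read bandwidth.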

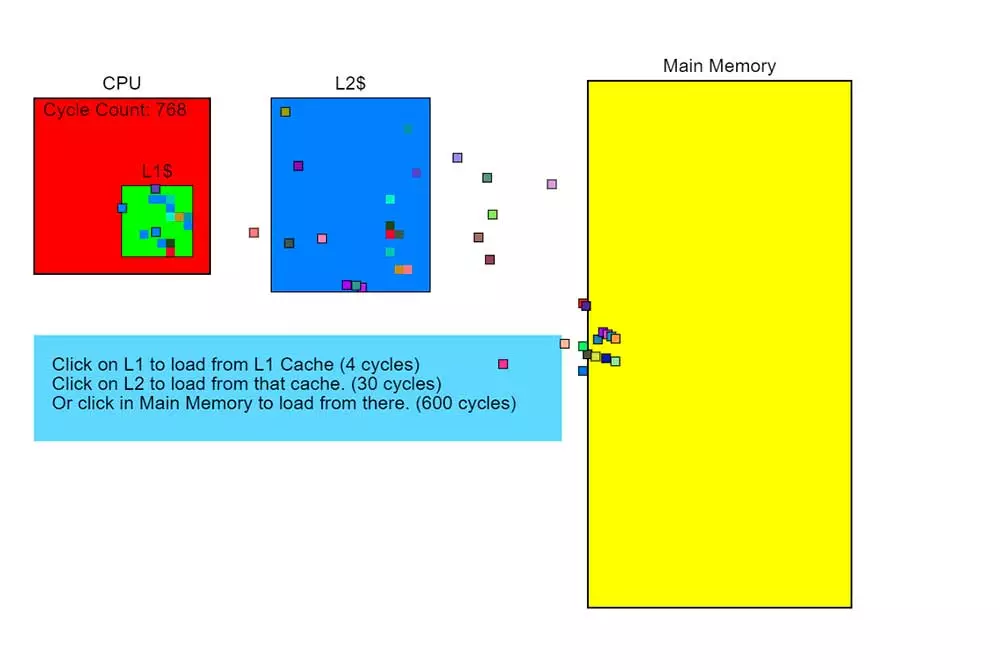

There is a very curious animation that makes the importance of latency across the different parts of the system perfectly clear just by clicking on the elements it represents. You only have to open the website and start clicking to see an animation of how the elements work.

It is especially interesting to click on system memory first, then on L2 and then on L1, to see how they are organized and how performance and latency flow between them. It is really curious and instructive. This is why AMD's step with Zen 2 and Zen 3 was crucial for taking on Intel, at the cost of a very large area of the die, something Intel now has to replicate and could not do earlier because of its lithographic process.

Temperature, more serious than latency and performance on PC?

Logically, temperature is a determining factor in the performance of any chip. The problem is that it is inherent to the technology, since any chip under voltage will run at some temperature simply by operating. The more complex the chip and the more cores and units it has, the more frequency and, logically, the more voltage it needs, and so the hotter it runs.

As expected, this is somewhat ambiguous: heat will always be a limiting factor, but it is not something technology can eliminate, and we simply have to live with it as we have done since the first chip was created.

In short, latency between the different PC components is the key factor that limits performance, and will keep limiting it, more than any other, since a higher overall speed is worth nothing if access times and the transfer of information between components keep getting slower.
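The usual first-order model behind this is that dynamic power grows linearly with frequency and with the square of voltage (P ≈ C·V²·f). The sketch below compares a chip against its own baseline. The 10% frequency and 5% voltage increases are hypothetical, and the model deliberately ignores leakage and other static power.

```python
def relative_dynamic_power(voltage_ratio: float, frequency_ratio: float) -> float:
    """Dynamic power relative to a baseline, using P proportional to V^2 * f.

    Switched capacitance is assumed unchanged, and static (leakage) power
    is not modeled.
    """
    return voltage_ratio ** 2 * frequency_ratio


# Hypothetical overclock: +10% frequency that requires +5% voltage.
ratio = relative_dynamic_power(voltage_ratio=1.05, frequency_ratio=1.10)
print(f"dynamic power rises by about {100 * (ratio - 1):.0f}%")
```

Under these assumptions a 10% frequency gain costs roughly twice that in extra power, and all of it has to be removed as heat.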