One of the fundamental pieces of every GPU is the texture unit, which is responsible for mapping an image onto the triangles of a scene, a process we call texturing, and also for what we call texture interpolation.

How does texture interpolation work?

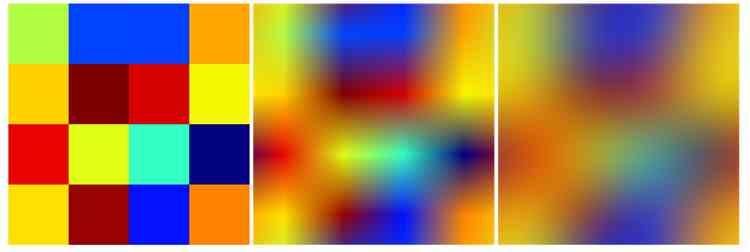

Texture interpolation is an extension of texture mapping: it consists of creating color gradients from one pixel to the next in order to eliminate pixelation in the scene. The simplest method is the bilinear filter, or bilinear interpolation, which takes the 4 texels closest to each sample point and blends them to obtain the final color.
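To make the four-neighbor calculation concrete, here is a minimal sketch of bilinear interpolation in Python. It is only an illustration, not how any particular GPU implements it: the function name `bilinear_sample`, the single-channel texture stored as a list of rows, and the clamping at the borders are all assumptions made for the example.

```python
import math

def bilinear_sample(texture, u, v):
    """Sample a single-channel texture at continuous texel coordinates (u, v).

    `texture` is a list of rows (hypothetical layout chosen for this sketch).
    """
    height = len(texture)
    width = len(texture[0])

    # Clamp the coordinates to the texture (assumed border behavior).
    u = min(max(u, 0.0), width - 1)
    v = min(max(v, 0.0), height - 1)

    # Integer coordinates of the four nearest texels.
    x0 = int(math.floor(u))
    y0 = int(math.floor(v))
    x1 = min(x0 + 1, width - 1)
    y1 = min(y0 + 1, height - 1)

    # Fractional position between those texels.
    fx = u - x0
    fy = v - y0

    # Blend horizontally on the two rows, then vertically between them.
    top = texture[y0][x0] * (1 - fx) + texture[y0][x1] * fx
    bottom = texture[y1][x0] * (1 - fx) + texture[y1][x1] * fx
    return top * (1 - fy) + bottom * fy


texture = [
    [0, 100],
    [100, 200],
]
center = bilinear_sample(texture, 0.5, 0.5)  # halfway between all four texels
```

Sampling exactly on a texel returns that texel's value, while sampling between texels returns a weighted mix, which is what produces the smooth gradient instead of visible blocks.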

Today, much more complex interpolation schemes than the bilinear filter are in use, and they draw on a greater number of texels per sample. This is the case with the anisotropic filter, which at its various levels can use 8, 16 and even 32 samples per pixel in order to achieve higher image quality.

However, despite the existence of more complex texture interpolation algorithms, the vast majority of graphics hardware is designed around the bilinear filter, which is the cheapest and easiest of all to implement in hardware and achieves results that today we could consider free as far as computational cost is concerned.

Texture interpolation and data cache

When the texture unit textures a polygon, it looks up the color value stored in the texture in memory and applies it to the corresponding pixel. The specified shader or shaders then perform their calculations on it, and the final result is sent to the ROPs so that it is written to the image buffer.

In the middle of this process the interpolation, or filtering, of textures is carried out. Because it is such a repetitive task, it is handled by dedicated hardware, the texture unit, which is in charge of applying the different filters. For this it requires high bandwidth, which increases with the number of samples required per pixel: at least four times that of a single texel fetch, since four texels are what the bilinear filter requires.

The texture units in today’s GPUs are grouped in fours in each shader unit or Compute Unit. With four texture units each fetching four texels for a bilinear sample, 16 32-bit accesses are required per clock cycle per shader unit. That is why the data cache of that same unit, and its bandwidth, are used to perform texture filtering.
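The figure of 16 accesses per clock follows from simple arithmetic, sketched below in Python. The constants come from the text (four texture units per shader unit, four texels per bilinear sample, 32 bits per texel); the 1.5 GHz clock used in the example is a hypothetical value chosen only to turn the per-cycle figure into a bandwidth.

```python
TEXTURE_UNITS_PER_SHADER_UNIT = 4   # from the text
TEXELS_PER_BILINEAR_SAMPLE = 4      # from the text
BITS_PER_TEXEL = 32                 # RGBA with 8 bits per component

def accesses_per_clock():
    """32-bit cache accesses one shader unit needs each clock cycle."""
    return TEXTURE_UNITS_PER_SHADER_UNIT * TEXELS_PER_BILINEAR_SAMPLE

def bytes_per_clock():
    """Bytes read from the data cache per clock cycle per shader unit."""
    return accesses_per_clock() * BITS_PER_TEXEL // 8

def bandwidth_gb_per_s(clock_hz):
    """Cache bandwidth per shader unit at a given clock, in GB/s."""
    return bytes_per_clock() * clock_hz / 1e9

# Hypothetical 1.5 GHz clock, an assumption made only for this example.
example_bandwidth = bandwidth_gb_per_s(1.5e9)
```

Under these assumptions each shader unit reads 64 bytes per cycle for bilinear filtering alone, which gives an idea of why the filtering is fed from the local data cache rather than from main memory.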

At the same time, if a higher-precision texture filter is required, each texture unit still takes only 4 samples per clock cycle, so more complex texture interpolation algorithms need a greater number of clock cycles, which reduces the texturing rate.
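As a rough illustration of that trade-off, the following Python sketch estimates how many clock cycles a filter needs when a texture unit can fetch 4 samples per cycle. It is a simplified model that assumes the cost scales only with the number of samples; real hardware may behave differently, and the function names are made up for the example.

```python
import math

SAMPLES_PER_CLOCK = 4  # per texture unit, as stated in the text

def cycles_per_filtered_sample(samples_needed):
    """Clock cycles needed to gather all samples for one filtered result."""
    return math.ceil(samples_needed / SAMPLES_PER_CLOCK)

def relative_texturing_rate(samples_needed):
    """Texturing rate relative to bilinear filtering (1.0 = full rate)."""
    return 1 / cycles_per_filtered_sample(samples_needed)

# Sample counts mentioned earlier for bilinear and anisotropic filtering.
for samples in (4, 8, 16, 32):
    print(samples, cycles_per_filtered_sample(samples),
          relative_texturing_rate(samples))
```

In this model a 16-sample filter occupies the texture unit for four cycles, so it delivers a quarter of the bilinear texturing rate.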

The computational cost of texture interpolation

Today's shader units can perform an enormous number of calculations per clock cycle. They reach several TFLOPS, where FLOPS stands for floating-point operations per second and the prefix T (tera) denotes 10^12 operations, so the computing power of GPUs has increased enormously.

Today, texture interpolation could be carried out without problems by a shader program running on the GPU, which in theory would make texture units unnecessary. Why is this not done? Because to compensate for the loss of the texture units we would need to increase the power of the compute units that execute the shaders, and that would be much more expensive. That is, we would need to add more transistors than we would have saved. Hence texture units remain inside GPUs more than twenty years after the first 3D cards, and they will not disappear.

Bear in mind that texture interpolation is carried out for every texel processed in the scene, which is a massive number, especially at high resolutions such as 1440p or 4K. So it is not worthwhile to remove the texture units from the hardware: any graphics hardware that dispenses with them will have major performance problems, not to mention that all games and applications already take their existence for granted.

Current limitations to the texture unit

Having explained its usefulness, we now have to consider the limitations of the texture unit when it comes to interpolation. The usual texture format is RGBA8888, in which each component has 8-bit precision and can therefore take 256 values.

This greatly simplifies the hardware implementation of texture interpolation: although each texture unit fetches the full 32 bits of each texel from the data cache, the four components are processed separately rather than together.

The problem with this implementation? When the texture unit interpolates each of the 4 components, it does so using only 256 values. This simplifies the hardware, but it limits the precision of the data that can be obtained, so we do not end up with the ideal interpolation result, only an approximation of it.
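The effect of working with only 256 values can be seen in a small Python sketch. It compares an ideal interpolation between two neighboring component values with one whose result is rounded back to an 8-bit integer. This is a simplified model of the limitation described here, not a description of any specific GPU's filtering precision, and the helper names are hypothetical.

```python
def lerp(a, b, t):
    """Ideal linear interpolation between a and b with weight t in [0, 1]."""
    return a + (b - a) * t

def lerp_8bit(a, b, t):
    """Same interpolation, with the result rounded to an 8-bit integer.

    Rounds half up; valid here because component values are non-negative.
    """
    return int(lerp(a, b, t) + 0.5)

def gradient(a, b, steps, quantize):
    """Interpolated values at evenly spaced positions between a and b."""
    f = lerp_8bit if quantize else lerp
    return [f(a, b, i / steps) for i in range(steps + 1)]

ideal = gradient(10, 11, 8, quantize=False)    # nine distinct values
limited = gradient(10, 11, 8, quantize=True)   # only the values 10 and 11
worst_error = max(abs(q - e) for q, e in zip(limited, ideal))
```

Between two adjacent 8-bit values the ideal gradient has as many shades as there are pixels, while the quantized one collapses into two flat bands, with an error of up to half a step per component.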

This lack of precision, combined with the small number of samples used per pixel, often produces image artifacts in game textures that spoil the final quality of the scene. The best solution? Much more complex interpolation methods such as bicubic interpolation, but this means raising the hardware to a much higher level of complexity than it has now, since it would require four times the bandwidth to the data cache.
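The factor of four comes from the size of the neighborhood each filter reads: bilinear uses 2 texels per axis (2 × 2 = 4), whereas bicubic uses 4 per axis (4 × 4 = 16). The Python sketch below shows this with the weights of a Catmull-Rom cubic, which is one common choice of cubic kernel and an assumption here, since the text does not name a specific one.

```python
def catmull_rom_weights(t):
    """Weights for the 4 texels along one axis, for a fraction t in [0, 1].

    Catmull-Rom is an assumed kernel; other cubic kernels also use 4 taps.
    """
    t2 = t * t
    t3 = t2 * t
    return (
        0.5 * (-t3 + 2 * t2 - t),
        0.5 * (3 * t3 - 5 * t2 + 2),
        0.5 * (-3 * t3 + 4 * t2 + t),
        0.5 * (t3 - t2),
    )

def texels_per_sample(taps_per_axis):
    """Texels read for one 2D filtered sample with a separable filter."""
    return taps_per_axis * taps_per_axis

BILINEAR_TAPS = 2
BICUBIC_TAPS = len(catmull_rom_weights(0.5))  # 4 taps per axis

bandwidth_ratio = texels_per_sample(BICUBIC_TAPS) / texels_per_sample(BILINEAR_TAPS)
```

With 16 texels per sample instead of 4, a texture unit limited to 4 samples per clock would either need four cycles per bicubic sample or four times the data cache bandwidth to keep the same rate.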