It seems that, at least for IBM, the future of computing does not lie in adding ever more processor cores, but rather the opposite. With this new generation of CPU, the manufacturer has reduced the number of cores but in return has substantially improved other aspects, such as doubling the amount of L3 cache (256 MB compared with 128 MB in its predecessor) and introducing separate cores dedicated exclusively to artificial intelligence.

This is the IBM Z Telum, the future of computing according to IBM

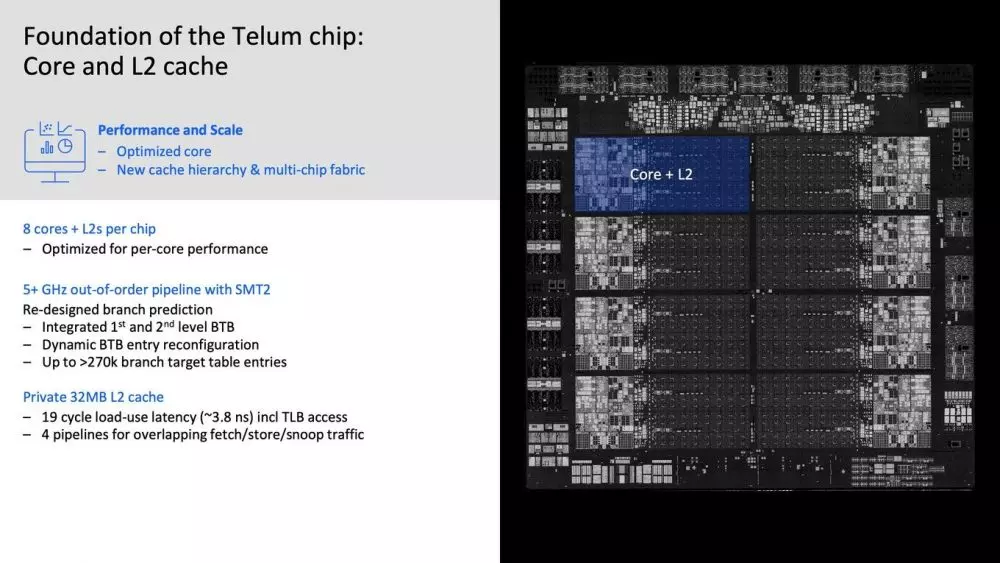

The right-hand side of the slide above makes it quite clear that the die contains a total of 8 physical cores, each of which can run 2 threads thanks to IBM's SMT2 technology (comparable to Intel Hyper-Threading or AMD SMT), for a total of 16 threads.

A notable feature of this processor is that all of its cores are out-of-order designs: they are built to keep executing instructions without stalling, which raises the average number of instructions completed per clock cycle. The design is therefore aimed at real-time applications that require an immediate response from the processor, and for this reason IBM has focused on maximizing single-threaded performance.

To this end, IBM has integrated 32 MB of L2 cache, which is initially reserved exclusively for the CPU cores (4 MB per core), and, as mentioned earlier, 256 MB of L3 cache. By comparison, an Intel Core i7-10700K processor has only 20 MB of what Intel calls Smart Cache (L2 + L3), so this is a huge amount of cache memory. In addition, IBM has provided four pipelines that communicate with the cores in just 19 clock cycles (3.8 ns), so cache accesses should be extremely fast.
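As a quick sanity check on those figures, here is a minimal sketch in Python. It assumes that the 19-cycle and 3.8 ns figures describe the same access, and that the 32 MB of L2 is split evenly across the 8 cores. The function and variable names are ours, for illustration only.

```python
def implied_clock_ghz(cycles: int, latency_ns: float) -> float:
    """Clock frequency (GHz) at which `cycles` clock cycles last `latency_ns` ns."""
    # Cycles per nanosecond is numerically the same as the frequency in GHz.
    return cycles / latency_ns


def per_core_share_mb(total_mb: float, cores: int) -> float:
    """Even split of a cache across cores (our assumption, not an IBM figure)."""
    return total_mb / cores


# Figures quoted in the article.
clock_ghz = implied_clock_ghz(19, 3.8)
l2_per_core_mb = per_core_share_mb(32, 8)

print(f"Implied clock: about {clock_ghz:.1f} GHz")
print(f"L2 per core: {l2_per_core_mb:.0f} MB")
```

Under those assumptions, the quoted latency corresponds to a clock of roughly 5 GHz.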

Finally, it should be mentioned that this IBM processor is manufactured by Samsung on its 7 nm process node. It has 22.5 billion transistors in an area of 530 mm² and, notably, it is built in 17 layers. IBM has not provided any further details on this, but we will stay tuned because it is quite interesting.
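To put the transistor count in perspective, the short Python sketch below works out the average density from the two figures quoted above. It is a whole-die average only (real density varies between logic and cache areas), and the names used are ours.

```python
TRANSISTORS = 22.5e9   # 22,500 million transistors, as quoted above
DIE_AREA_MM2 = 530.0   # die area in mm², as quoted above


def density_millions_per_mm2(transistors: float, area_mm2: float) -> float:
    """Average transistor density in millions of transistors per mm²."""
    return transistors / area_mm2 / 1e6


density = density_millions_per_mm2(TRANSISTORS, DIE_AREA_MM2)
print(f"Average density: about {density:.1f} million transistors per mm²")
```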

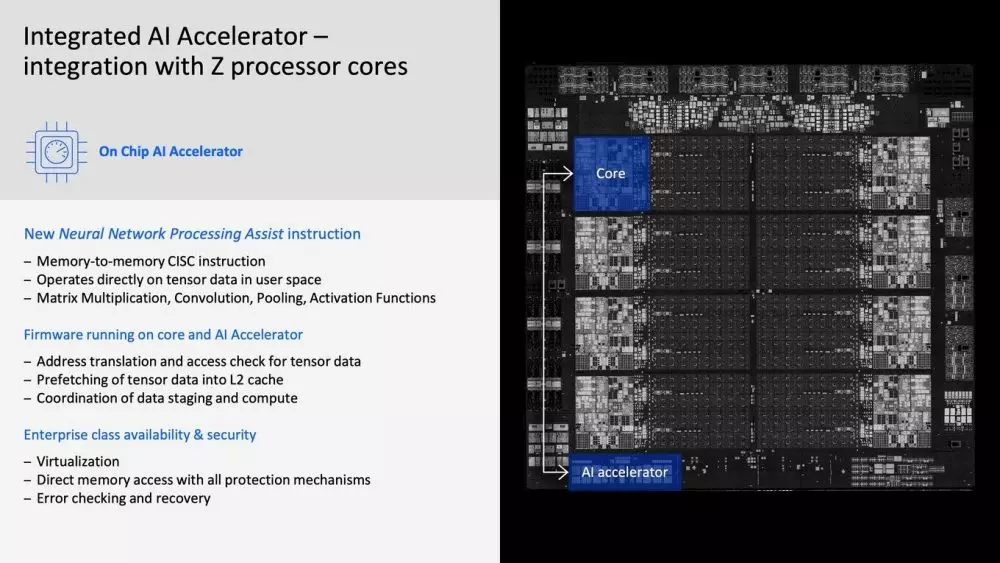

Exclusive cores for Artificial Intelligence applications

One of the features that makes this IBM Z Telum processor particularly interesting is that IBM has integrated dedicated cores for artificial intelligence. In other words, compared with the previous generation the number of cores has been reduced from 12 to 8 but, in addition to the improvements already discussed, the chip actually has more cores overall once these specialized ones are counted.

According to IBM, these cores achieve a throughput of 6 TFLOPS in FP16 calculations, and it is worth mentioning that IBM explicitly treats them as AI accelerators. They have direct access to the cores’ L2 cache, so data can be read at 120 GB/s and written at 80 GB/s. According to the manufacturer, this data can be pre-processed before it reaches the AI accelerator itself, raising the bandwidth to 600 GB/s, a staggering figure that allows the data to be processed almost instantly.
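To get a feel for what those bandwidth figures mean, here is a rough Python sketch. It assumes the quoted rates are sustained peaks, and it uses a hypothetical 1 GB payload chosen only for illustration. The names are ours, not IBM's.

```python
READ_GBPS = 120.0          # read bandwidth from L2, as quoted above
PREPROCESSED_GBPS = 600.0  # bandwidth after pre-processing, as quoted above
PEAK_FP16_TFLOPS = 6.0     # FP16 throughput, as quoted above
PAYLOAD_GB = 1.0           # hypothetical payload size, for illustration only


def transfer_time_ms(size_gb: float, bandwidth_gbps: float) -> float:
    """Time in milliseconds to move `size_gb` gigabytes at `bandwidth_gbps` GB/s."""
    return size_gb / bandwidth_gbps * 1000.0


def flops_per_byte(tflops: float, bandwidth_gbps: float) -> float:
    """Operations the accelerator can perform per byte delivered at peak rates."""
    return (tflops * 1e12) / (bandwidth_gbps * 1e9)


speedup = PREPROCESSED_GBPS / READ_GBPS
t_direct_ms = transfer_time_ms(PAYLOAD_GB, READ_GBPS)
t_preprocessed_ms = transfer_time_ms(PAYLOAD_GB, PREPROCESSED_GBPS)
balance = flops_per_byte(PEAK_FP16_TFLOPS, PREPROCESSED_GBPS)

print(f"Bandwidth gain from pre-processing: {speedup:.0f}x")
print(f"1 GB at 120 GB/s: {t_direct_ms:.2f} ms")
print(f"1 GB at 600 GB/s: {t_preprocessed_ms:.2f} ms")
print(f"Peak FP16 operations per byte at 600 GB/s: {balance:.0f}")
```

In other words, at the quoted peak rates the accelerator can perform around 10 FP16 operations for every byte it receives, so how well it is fed with data matters as much as its raw throughput.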

Of course, although we compared the cache of this IBM processor with that of a consumer Intel chip earlier, it is not designed for consumer applications at all, but for real-time applications in areas such as finance, the stock market, insurance, medicine and infrastructure. These processors are also expected to be integrated into multi-chip systems, and according to IBM they can even operate in a dual arrangement, in racks of 8 to 32 processors.